搜索到

12

篇与

的结果

-

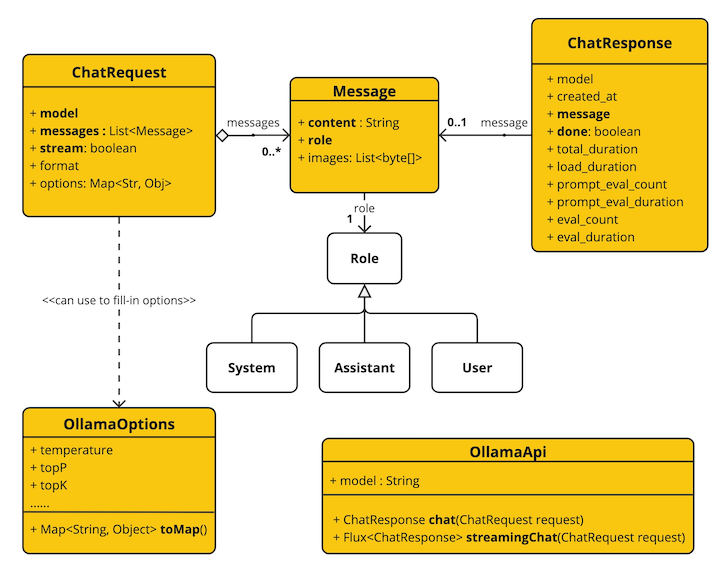

Spring AI来了 Spring AI来了Spring AI 项目旨在简化包含人工智能功能的应用程序的开发,避免不必要的复杂性。该项目从著名的 Python 项目(例如 LangChain 和 LlamaIndex)中汲取灵感,但 Spring AI 并不是这些项目的直接移植。 该项目的成立相信下一波生成式人工智能应用程序不仅适用于 Python 开发人员,而且将在许多编程语言中普遍存在。Spring AI 的核心提供了抽象,作为开发 AI 应用程序的基础。 这些抽象有多种实现,可以通过最少的代码更改轻松进行组件交换。一、Spring AI 提供以下功能Support for all major Model providers such as OpenAI, Microsoft, Amazon, Google, and Huggingface.(支持所有主要模型提供商,例如 OpenAI、Microsoft、Amazon、Google 和 Huggingface)Supported Model types are Chat and Text to Image with more on the way.(支持的模型类型包括“聊天”和“文本到图像”,还有更多模型类型正在开发中)Portable API across AI providers for Chat and for Embedding models. Both synchronous and stream API options are supported. Dropping down to access model specific features is also supported.(跨 AI 提供商的可移植 API,用于聊天和嵌入模型。 支持同步和流 API 选项。 还支持下拉访问模型特定功能)Mapping of AI Model output to POJOs.(AI 模型输出到 POJO 的映射)Support for all major Vector Database providers such as Azure Vector Search, Chroma, Milvus, Neo4j, PostgreSQL/PGVector, PineCone, Qdrant, Redis, and Weaviate(支持所有主要矢量数据库提供商,例如 Azure 矢量搜索、Chroma、Milvus、Neo4j、PostgreSQL/PGVector、PineCone、Qdrant、Redis 和 Weaviate)Portable API across Vector Store providers, including a novel SQL-like metadata filter API that is also portable.(跨 Vector Store 提供商的可移植 API,包括同样可移植的新颖的类似 SQL 的元数据过滤器 API)Function calling(函数调用)Spring Boot Auto Configuration and Starters for AI Models and Vector Stores.(AI 模型和向量存储的 Spring Boot 自动配置和启动器)ETL framework for Data Engineering(数据工程的 ETL 框架)二、俗语介绍ModelsModels模型是旨在处理和生成信息的算法,通常模仿人类的认知功能。 通过从大型数据集中学习模式和见解,这些模型可以做出预测、文本、图像或其他输出,从而增强跨行业的各种应用程序。有许多不同类型的人工智能模型,每种模型都适合特定的用例。 虽然 ChatGPT 及其生成式人工智能功能通过文本输入和输出吸引了用户,但许多模型和公司提供了多样化的输入和输出。 在 ChatGPT 之前,很多人对 Midjourney 和 Stable Diffusion 等文本到图像生成模型着迷。下表根据输入和输出类型对几种模型进行了分类:InputOutputExamplesLanguage/Code/Images (Multi-Modal)Language/CodeGPT4 - OpenAI, Google GeminiLanguage/CodeLanguage/CodeGPT 3.5 - OpenAI-Azure OpenAI, Google Bard, Meta LlamaLanguageImageDall-E - OpenAI + Azure, Deep AILanguage/ImageImageMidjourney, Stable Diffusion, RunwayMLTextNumbersMany (AKA embeddings)PromptsPrompts是基于语言的输入的基础,指导人工智能模型产生特定的输出。 对于熟悉 ChatGPT 的人来说,提示可能看起来只是发送到 API 的对话框中输入的文本。 然而,它包含的内容远不止于此。 在许多 AI 模型中,提示文本不仅仅是一个简单的字符串。ChatGPT 的 API 在Prompts中具有多个文本输入,每个文本输入都被分配一个角色。 例如,系统角色告诉模型如何行为并设置交互的上下文。 还有用户角色,通常是来自用户的输入。简单来说就是使用LLM等模型时,Prompts是输入,Prompts中输入内容被分配成不同的角色,每个角色控制不同的功能。Prompts的制定就成为重要的输入,可以共享,多人制定。Prompt Templates创建有效的提示涉及建立请求的上下文并用特定于用户输入的值替换部分请求。此过程使用传统的基于文本的模板引擎进行提示创建和管理。 Spring AI 为此使用 OSS 库 StringTemplate。Prompt Templates输入Prompts的模板,在Spring AI中,Prompt Templates可以比作Spring MVC架构中的“视图”。 提供模型对象(通常是 java.util.Map)来填充模板内的占位符。 “‘rendered’”字符串成为提供给 AI 模型的提示内容。EmbeddingsEmbeddings将文本转换为数值数组或向量,使人工智能模型能够处理和解释语言数据。 这种从文本到数字的转换是人工智能如何与人类语言交互并理解人类语言的关键要素。 作为探索人工智能的 Java 开发人员,没有必要理解复杂的数学理论或这些向量表示背后的具体实现。 对它们在人工智能系统中的角色和功能有基本的了解就足够了,特别是当您将人工智能功能集成到应用程序中时。嵌入在实际应用中尤其重要,例如检索增强生成(RAG)模式。 它们能够将数据表示为语义空间中的点,该语义空间类似于欧几里得几何的二维空间,但维度更高。 这意味着就像欧几里得几何中平面上的点根据其坐标可以接近或远离一样,在语义空间中,点的接近反映了含义的相似性。 关于相似主题的句子在这个多维空间中位置更近,就像图表上彼此靠近的点一样。 这种接近性有助于文本分类、语义搜索甚至产品推荐等任务,因为它允许人工智能根据相关概念在扩展语义环境中的“位置”来辨别和分组。您可以将这个语义空间视为一个向量。Output ParsingAI 模型的输出传统上以 java.lang.String 形式到达,即使您要求回复采用 JSON 格式。 它可能是正确的 JSON,但它不是 JSON 数据结构。 它只是一个字符串。 此外,在提示中询问“for JSON”并不是 100% 准确。这种复杂性导致了一个专门领域的出现,涉及创建提示以产生预期的输出,然后将生成的简单字符串解析为可用的数据结构以进行应用程序集成。输出解析采用精心设计的提示,通常需要与模型进行多次交互才能实现所需的格式。这一挑战促使 OpenAI 引入“OpenAI 函数”作为精确指定模型所需输出格式的方法。三、Bringing Your Data to the AI model(将您的数据引入人工智能模型)存在三种技术可用于自定义 AI 模型以合并您的数据:微调:这种传统的机器学习技术涉及定制模型并更改其内部权重。 然而,对于机器学习专家来说,这是一个具有挑战性的过程,而且对于 GPT 等模型来说,由于其规模,资源极其密集。 此外,某些型号可能不提供此选项。提示填充:更实用的替代方案是将数据嵌入到提供给模型的提示中。 考虑到模型的令牌限制,需要技术来在模型的上下文窗口中呈现相关数据。 这种方法通俗地称为“填充提示”。 Spring AI 库可帮助您实现基于“填充提示”技术(也称为检索增强生成 (RAG))的解决方案。函数调用:此技术允许注册自定义用户函数,将大型语言模型连接到外部系统的 API。 Spring AI 极大地简化了支持函数调用所需编写的代码。四、其它说明Retrieval Augmented Generation一种名为检索增强生成 (RAG) 的技术已经出现,旨在解决将相关数据纳入提示中以实现准确的 AI 模型响应的挑战。该方法涉及批处理风格的编程模型,其中作业从文档中读取非结构化数据,对其进行转换,然后将其写入矢量数据库。 从较高层面来看,这是一个 ETL(提取、转换和加载)管道。 RAG技术的检索部分使用向量数据库。作为将非结构化数据加载到矢量数据库的一部分,最重要的转换之一是将原始文档分割成更小的部分。 将原始文档分割成更小的部分的过程有两个重要步骤:将文档拆分为多个部分,同时保留内容的语义边界。 例如,对于包含段落和表格的文档,应避免在段落或表格的中间拆分文档。 对于代码,避免在方法实现的中间拆分代码。将文档的各个部分进一步拆分为大小仅为 AI 模型令牌限制的一小部分的部分。RAG 的下一阶段是处理用户输入。 当人工智能模型要回答用户的问题时,该问题和所有“相似”文档片段都会被放入发送给人工智能模型的提示中。 这就是使用矢量数据库的原因。 它非常擅长查找相似内容。实现 RAG 时使用了几个概念。 这些概念映射到 Spring AI 中的类:DocumentReader:一个 Java 函数接口,负责从数据源加载 List。 常见的数据源有 PDF、Markdown 和 JSON。Document:数据源的基于文本的表示形式,还包含用于描述内容的元数据。DocumentTransformer:负责以各种方式处理数据(例如,将文档分割成更小的部分或向文档添加额外的元数据)。DocumentWriter:允许您将文档保存到数据库中(最常见的是在 AI 堆栈中,矢量数据库)。Embedding:将数据表示为 List,向量数据库使用它来计算用户查询与相关文档的“相似度”。Function Calling大型语言模型(LLM)在训练后被冻结,导致知识过时,并且无法访问或修改外部数据。函数调用机制解决了这些缺点。 它允许您注册自定义用户函数,将大型语言模型连接到外部系统的 API。 这些系统可以为法学硕士提供实时数据并代表他们执行数据处理操作。Spring AI 极大地简化了支持函数调用所需编写的代码。 它为您代理函数调用对话。 您可以将函数作为 @Bean 提供,然后在提示选项中提供该函数的 bean 名称以激活该函数。 您还可以在单个提示中定义和引用多个函数。Evaluating AI responses有效评估人工智能系统响应用户请求的输出对于确保最终应用的准确性和有用性非常重要。 一些新兴技术可以使用预训练模型本身来实现此目的。此评估过程涉及分析生成的响应是否符合用户的意图和查询的上下文。 相关性、连贯性和事实正确性等指标用于衡量人工智能生成的响应的质量。一种方法涉及呈现用户的请求和人工智能模型对模型的响应,查询响应是否与提供的数据一致。此外,利用矢量数据库中存储的信息作为补充数据可以增强评估过程,有助于确定响应相关性。Spring AI 项目当前提供了一些非常基本的示例,说明如何以提示的形式评估响应以包含在 JUnit 测试中。五、功能说明Embeddings ModelsEmbeddings APISpring AI OpenAI EmbeddingsSpring AI Azure OpenAI EmbeddingsSpring AI Ollama EmbeddingsSpring AI Transformers (ONNX) EmbeddingsSpring AI PostgresML EmbeddingsSpring AI Bedrock Cohere EmbeddingsSpring AI Bedrock Titan EmbeddingsSpring AI VertexAI EmbeddingsSpring AI MistralAI EmbeddingsChat ModelsChat Completion APIOpenAI Chat Completion (streaming and function-calling support)Microsoft Azure Open AI Chat Completion (streaming and function-calling support)Ollama Chat CompletionHuggingFace Chat Completion (no streaming support)Google Vertex AI PaLM2 Chat Completion (no streaming support)Google Vertex AI Gemini Chat Completion (streaming, multi-modality & function-calling support)Amazon BedrockCohere Chat CompletionLlama2 Chat CompletionTitan Chat CompletionAnthropic Chat CompletionMistralAI Chat Completion (streaming and function-calling support)Image Generation ModelsImage Generation APIOpenAI Image GenerationStabilityAI Image GenerationVector DatabasesVector Database APIAzure Vector Search - The Azure vector store.ChromaVectorStore - The Chroma vector store.MilvusVectorStore - The Milvus vector store.Neo4jVectorStore - The Neo4j vector store.PgVectorStore - The PostgreSQL/PGVector vector store.PineconeVectorStore - PineCone vector store.QdrantVectorStore - Qdrant vector store.RedisVectorStore - The Redis vector store.WeaviateVectorStore - The Weaviate vector store.SimpleVectorStore - A simple (in-memory) implementation of persistent vector storage, good for educational purposes.六、springboot ai接入Ollama Chat这里以Chat Models为例,创建一个springboot ai项目。说明:由于Openai api key收费,这里以Ollama Chat为LLM演示。本地搭建Ollama Chat下载Ollama https://ollama.com/download/OllamaSetup.exe拉取运行ollama run llama2如果想支持中文拉取这个ollama pull llama2-chinese执行ollama run llama2-chineseollama默认服务地址端口http://localhost:11434/搭建springboot ai项目说明:对JDK有版本要求,最低JDK17.maven:3.8.3JDK:172、引入依赖<?xml version="1.0" encoding="UTF-8"?> <project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 https://maven.apache.org/xsd/maven-4.0.0.xsd"> <modelVersion>4.0.0</modelVersion> <parent> <groupId>org.springframework.boot</groupId> <artifactId>spring-boot-starter-parent</artifactId> <version>3.2.4</version> <relativePath/> <!-- lookup parent from repository --> </parent> <groupId>com.example.ai</groupId> <artifactId>ollama-chat</artifactId> <version>0.0.1-SNAPSHOT</version> <name>ollama-chat</name> <description>Ollama Chat project for Spring Boot</description> <properties> <java.version>17</java.version> </properties> <dependencies> <dependency> <groupId>org.springframework.boot</groupId> <artifactId>spring-boot-starter-web</artifactId> </dependency> <dependency> <groupId>org.springframework.boot</groupId> <artifactId>spring-boot-starter-test</artifactId> <scope>test</scope> </dependency> <dependency> <groupId>org.springframework.ai</groupId> <artifactId>spring-ai-ollama-spring-boot-starter</artifactId> </dependency> </dependencies> <build> <plugins> <plugin> <groupId>org.springframework.boot</groupId> <artifactId>spring-boot-maven-plugin</artifactId> </plugin> </plugins> </build> <repositories> <repository> <id>spring-milestones</id> <name>Spring Milestones</name> <url>https://repo.spring.io/milestone</url> <snapshots> <enabled>false</enabled> </snapshots> </repository> <repository> <id>spring-snapshots</id> <name>Spring Snapshots</name> <url>https://repo.spring.io/snapshot</url> <releases> <enabled>false</enabled> </releases> </repository> </repositories> <dependencyManagement> <dependencies> <dependency> <groupId>org.springframework.ai</groupId> <artifactId>spring-ai-bom</artifactId> <version>1.0.0-SNAPSHOT</version> <type>pom</type> <scope>import</scope> </dependency> </dependencies> </dependencyManagement> </project> 编写Controllerpackage com.example.ai.ollamachat.controller; import org.springframework.ai.chat.ChatResponse; import org.springframework.ai.chat.messages.UserMessage; import org.springframework.ai.chat.prompt.Prompt; import org.springframework.ai.ollama.OllamaChatClient; import org.springframework.beans.factory.annotation.Autowired; import org.springframework.web.bind.annotation.GetMapping; import org.springframework.web.bind.annotation.RequestParam; import org.springframework.web.bind.annotation.RestController; import reactor.core.publisher.Flux; import java.util.Map; @RestController public class ChatController { private final OllamaChatClient chatClient; @Autowired public ChatController(OllamaChatClient chatClient) { this.chatClient = chatClient; } @GetMapping("/ai/generate") public Map generate(@RequestParam(value = "message", defaultValue = "Tell me a joke") String message) { return Map.of("generation", chatClient.call(message)); } @GetMapping("/ai/generateStream") public Flux<ChatResponse> generateStream(@RequestParam(value = "message", defaultValue = "Tell me a joke") String message) { Prompt prompt = new Prompt(new UserMessage(message)); return chatClient.stream(prompt); } }application.properties配置spring.application.name=ollama-chat spring.ai.ollama.base-url=http://localhost:11434/ spring.ai.ollama.chat.enabled=true spring.ai.ollama.chat.options.model=llama2 spring.ai.ollama.chat.options.temperature=0.7更多参数配置见文档 https://docs.spring.io/spring-ai/reference/api/clients/ollama-chat.html测试访问http://localhost:8080/ai/generate返回结果{"generation":"\nWhy don't scientists trust atoms? Because they make up everything! \uD83D\uDE02"}说明OllamaApi 聊天接口和构建块图:更多其它LLM接入,后续更新。

Spring AI来了 Spring AI来了Spring AI 项目旨在简化包含人工智能功能的应用程序的开发,避免不必要的复杂性。该项目从著名的 Python 项目(例如 LangChain 和 LlamaIndex)中汲取灵感,但 Spring AI 并不是这些项目的直接移植。 该项目的成立相信下一波生成式人工智能应用程序不仅适用于 Python 开发人员,而且将在许多编程语言中普遍存在。Spring AI 的核心提供了抽象,作为开发 AI 应用程序的基础。 这些抽象有多种实现,可以通过最少的代码更改轻松进行组件交换。一、Spring AI 提供以下功能Support for all major Model providers such as OpenAI, Microsoft, Amazon, Google, and Huggingface.(支持所有主要模型提供商,例如 OpenAI、Microsoft、Amazon、Google 和 Huggingface)Supported Model types are Chat and Text to Image with more on the way.(支持的模型类型包括“聊天”和“文本到图像”,还有更多模型类型正在开发中)Portable API across AI providers for Chat and for Embedding models. Both synchronous and stream API options are supported. Dropping down to access model specific features is also supported.(跨 AI 提供商的可移植 API,用于聊天和嵌入模型。 支持同步和流 API 选项。 还支持下拉访问模型特定功能)Mapping of AI Model output to POJOs.(AI 模型输出到 POJO 的映射)Support for all major Vector Database providers such as Azure Vector Search, Chroma, Milvus, Neo4j, PostgreSQL/PGVector, PineCone, Qdrant, Redis, and Weaviate(支持所有主要矢量数据库提供商,例如 Azure 矢量搜索、Chroma、Milvus、Neo4j、PostgreSQL/PGVector、PineCone、Qdrant、Redis 和 Weaviate)Portable API across Vector Store providers, including a novel SQL-like metadata filter API that is also portable.(跨 Vector Store 提供商的可移植 API,包括同样可移植的新颖的类似 SQL 的元数据过滤器 API)Function calling(函数调用)Spring Boot Auto Configuration and Starters for AI Models and Vector Stores.(AI 模型和向量存储的 Spring Boot 自动配置和启动器)ETL framework for Data Engineering(数据工程的 ETL 框架)二、俗语介绍ModelsModels模型是旨在处理和生成信息的算法,通常模仿人类的认知功能。 通过从大型数据集中学习模式和见解,这些模型可以做出预测、文本、图像或其他输出,从而增强跨行业的各种应用程序。有许多不同类型的人工智能模型,每种模型都适合特定的用例。 虽然 ChatGPT 及其生成式人工智能功能通过文本输入和输出吸引了用户,但许多模型和公司提供了多样化的输入和输出。 在 ChatGPT 之前,很多人对 Midjourney 和 Stable Diffusion 等文本到图像生成模型着迷。下表根据输入和输出类型对几种模型进行了分类:InputOutputExamplesLanguage/Code/Images (Multi-Modal)Language/CodeGPT4 - OpenAI, Google GeminiLanguage/CodeLanguage/CodeGPT 3.5 - OpenAI-Azure OpenAI, Google Bard, Meta LlamaLanguageImageDall-E - OpenAI + Azure, Deep AILanguage/ImageImageMidjourney, Stable Diffusion, RunwayMLTextNumbersMany (AKA embeddings)PromptsPrompts是基于语言的输入的基础,指导人工智能模型产生特定的输出。 对于熟悉 ChatGPT 的人来说,提示可能看起来只是发送到 API 的对话框中输入的文本。 然而,它包含的内容远不止于此。 在许多 AI 模型中,提示文本不仅仅是一个简单的字符串。ChatGPT 的 API 在Prompts中具有多个文本输入,每个文本输入都被分配一个角色。 例如,系统角色告诉模型如何行为并设置交互的上下文。 还有用户角色,通常是来自用户的输入。简单来说就是使用LLM等模型时,Prompts是输入,Prompts中输入内容被分配成不同的角色,每个角色控制不同的功能。Prompts的制定就成为重要的输入,可以共享,多人制定。Prompt Templates创建有效的提示涉及建立请求的上下文并用特定于用户输入的值替换部分请求。此过程使用传统的基于文本的模板引擎进行提示创建和管理。 Spring AI 为此使用 OSS 库 StringTemplate。Prompt Templates输入Prompts的模板,在Spring AI中,Prompt Templates可以比作Spring MVC架构中的“视图”。 提供模型对象(通常是 java.util.Map)来填充模板内的占位符。 “‘rendered’”字符串成为提供给 AI 模型的提示内容。EmbeddingsEmbeddings将文本转换为数值数组或向量,使人工智能模型能够处理和解释语言数据。 这种从文本到数字的转换是人工智能如何与人类语言交互并理解人类语言的关键要素。 作为探索人工智能的 Java 开发人员,没有必要理解复杂的数学理论或这些向量表示背后的具体实现。 对它们在人工智能系统中的角色和功能有基本的了解就足够了,特别是当您将人工智能功能集成到应用程序中时。嵌入在实际应用中尤其重要,例如检索增强生成(RAG)模式。 它们能够将数据表示为语义空间中的点,该语义空间类似于欧几里得几何的二维空间,但维度更高。 这意味着就像欧几里得几何中平面上的点根据其坐标可以接近或远离一样,在语义空间中,点的接近反映了含义的相似性。 关于相似主题的句子在这个多维空间中位置更近,就像图表上彼此靠近的点一样。 这种接近性有助于文本分类、语义搜索甚至产品推荐等任务,因为它允许人工智能根据相关概念在扩展语义环境中的“位置”来辨别和分组。您可以将这个语义空间视为一个向量。Output ParsingAI 模型的输出传统上以 java.lang.String 形式到达,即使您要求回复采用 JSON 格式。 它可能是正确的 JSON,但它不是 JSON 数据结构。 它只是一个字符串。 此外,在提示中询问“for JSON”并不是 100% 准确。这种复杂性导致了一个专门领域的出现,涉及创建提示以产生预期的输出,然后将生成的简单字符串解析为可用的数据结构以进行应用程序集成。输出解析采用精心设计的提示,通常需要与模型进行多次交互才能实现所需的格式。这一挑战促使 OpenAI 引入“OpenAI 函数”作为精确指定模型所需输出格式的方法。三、Bringing Your Data to the AI model(将您的数据引入人工智能模型)存在三种技术可用于自定义 AI 模型以合并您的数据:微调:这种传统的机器学习技术涉及定制模型并更改其内部权重。 然而,对于机器学习专家来说,这是一个具有挑战性的过程,而且对于 GPT 等模型来说,由于其规模,资源极其密集。 此外,某些型号可能不提供此选项。提示填充:更实用的替代方案是将数据嵌入到提供给模型的提示中。 考虑到模型的令牌限制,需要技术来在模型的上下文窗口中呈现相关数据。 这种方法通俗地称为“填充提示”。 Spring AI 库可帮助您实现基于“填充提示”技术(也称为检索增强生成 (RAG))的解决方案。函数调用:此技术允许注册自定义用户函数,将大型语言模型连接到外部系统的 API。 Spring AI 极大地简化了支持函数调用所需编写的代码。四、其它说明Retrieval Augmented Generation一种名为检索增强生成 (RAG) 的技术已经出现,旨在解决将相关数据纳入提示中以实现准确的 AI 模型响应的挑战。该方法涉及批处理风格的编程模型,其中作业从文档中读取非结构化数据,对其进行转换,然后将其写入矢量数据库。 从较高层面来看,这是一个 ETL(提取、转换和加载)管道。 RAG技术的检索部分使用向量数据库。作为将非结构化数据加载到矢量数据库的一部分,最重要的转换之一是将原始文档分割成更小的部分。 将原始文档分割成更小的部分的过程有两个重要步骤:将文档拆分为多个部分,同时保留内容的语义边界。 例如,对于包含段落和表格的文档,应避免在段落或表格的中间拆分文档。 对于代码,避免在方法实现的中间拆分代码。将文档的各个部分进一步拆分为大小仅为 AI 模型令牌限制的一小部分的部分。RAG 的下一阶段是处理用户输入。 当人工智能模型要回答用户的问题时,该问题和所有“相似”文档片段都会被放入发送给人工智能模型的提示中。 这就是使用矢量数据库的原因。 它非常擅长查找相似内容。实现 RAG 时使用了几个概念。 这些概念映射到 Spring AI 中的类:DocumentReader:一个 Java 函数接口,负责从数据源加载 List。 常见的数据源有 PDF、Markdown 和 JSON。Document:数据源的基于文本的表示形式,还包含用于描述内容的元数据。DocumentTransformer:负责以各种方式处理数据(例如,将文档分割成更小的部分或向文档添加额外的元数据)。DocumentWriter:允许您将文档保存到数据库中(最常见的是在 AI 堆栈中,矢量数据库)。Embedding:将数据表示为 List,向量数据库使用它来计算用户查询与相关文档的“相似度”。Function Calling大型语言模型(LLM)在训练后被冻结,导致知识过时,并且无法访问或修改外部数据。函数调用机制解决了这些缺点。 它允许您注册自定义用户函数,将大型语言模型连接到外部系统的 API。 这些系统可以为法学硕士提供实时数据并代表他们执行数据处理操作。Spring AI 极大地简化了支持函数调用所需编写的代码。 它为您代理函数调用对话。 您可以将函数作为 @Bean 提供,然后在提示选项中提供该函数的 bean 名称以激活该函数。 您还可以在单个提示中定义和引用多个函数。Evaluating AI responses有效评估人工智能系统响应用户请求的输出对于确保最终应用的准确性和有用性非常重要。 一些新兴技术可以使用预训练模型本身来实现此目的。此评估过程涉及分析生成的响应是否符合用户的意图和查询的上下文。 相关性、连贯性和事实正确性等指标用于衡量人工智能生成的响应的质量。一种方法涉及呈现用户的请求和人工智能模型对模型的响应,查询响应是否与提供的数据一致。此外,利用矢量数据库中存储的信息作为补充数据可以增强评估过程,有助于确定响应相关性。Spring AI 项目当前提供了一些非常基本的示例,说明如何以提示的形式评估响应以包含在 JUnit 测试中。五、功能说明Embeddings ModelsEmbeddings APISpring AI OpenAI EmbeddingsSpring AI Azure OpenAI EmbeddingsSpring AI Ollama EmbeddingsSpring AI Transformers (ONNX) EmbeddingsSpring AI PostgresML EmbeddingsSpring AI Bedrock Cohere EmbeddingsSpring AI Bedrock Titan EmbeddingsSpring AI VertexAI EmbeddingsSpring AI MistralAI EmbeddingsChat ModelsChat Completion APIOpenAI Chat Completion (streaming and function-calling support)Microsoft Azure Open AI Chat Completion (streaming and function-calling support)Ollama Chat CompletionHuggingFace Chat Completion (no streaming support)Google Vertex AI PaLM2 Chat Completion (no streaming support)Google Vertex AI Gemini Chat Completion (streaming, multi-modality & function-calling support)Amazon BedrockCohere Chat CompletionLlama2 Chat CompletionTitan Chat CompletionAnthropic Chat CompletionMistralAI Chat Completion (streaming and function-calling support)Image Generation ModelsImage Generation APIOpenAI Image GenerationStabilityAI Image GenerationVector DatabasesVector Database APIAzure Vector Search - The Azure vector store.ChromaVectorStore - The Chroma vector store.MilvusVectorStore - The Milvus vector store.Neo4jVectorStore - The Neo4j vector store.PgVectorStore - The PostgreSQL/PGVector vector store.PineconeVectorStore - PineCone vector store.QdrantVectorStore - Qdrant vector store.RedisVectorStore - The Redis vector store.WeaviateVectorStore - The Weaviate vector store.SimpleVectorStore - A simple (in-memory) implementation of persistent vector storage, good for educational purposes.六、springboot ai接入Ollama Chat这里以Chat Models为例,创建一个springboot ai项目。说明:由于Openai api key收费,这里以Ollama Chat为LLM演示。本地搭建Ollama Chat下载Ollama https://ollama.com/download/OllamaSetup.exe拉取运行ollama run llama2如果想支持中文拉取这个ollama pull llama2-chinese执行ollama run llama2-chineseollama默认服务地址端口http://localhost:11434/搭建springboot ai项目说明:对JDK有版本要求,最低JDK17.maven:3.8.3JDK:172、引入依赖<?xml version="1.0" encoding="UTF-8"?> <project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 https://maven.apache.org/xsd/maven-4.0.0.xsd"> <modelVersion>4.0.0</modelVersion> <parent> <groupId>org.springframework.boot</groupId> <artifactId>spring-boot-starter-parent</artifactId> <version>3.2.4</version> <relativePath/> <!-- lookup parent from repository --> </parent> <groupId>com.example.ai</groupId> <artifactId>ollama-chat</artifactId> <version>0.0.1-SNAPSHOT</version> <name>ollama-chat</name> <description>Ollama Chat project for Spring Boot</description> <properties> <java.version>17</java.version> </properties> <dependencies> <dependency> <groupId>org.springframework.boot</groupId> <artifactId>spring-boot-starter-web</artifactId> </dependency> <dependency> <groupId>org.springframework.boot</groupId> <artifactId>spring-boot-starter-test</artifactId> <scope>test</scope> </dependency> <dependency> <groupId>org.springframework.ai</groupId> <artifactId>spring-ai-ollama-spring-boot-starter</artifactId> </dependency> </dependencies> <build> <plugins> <plugin> <groupId>org.springframework.boot</groupId> <artifactId>spring-boot-maven-plugin</artifactId> </plugin> </plugins> </build> <repositories> <repository> <id>spring-milestones</id> <name>Spring Milestones</name> <url>https://repo.spring.io/milestone</url> <snapshots> <enabled>false</enabled> </snapshots> </repository> <repository> <id>spring-snapshots</id> <name>Spring Snapshots</name> <url>https://repo.spring.io/snapshot</url> <releases> <enabled>false</enabled> </releases> </repository> </repositories> <dependencyManagement> <dependencies> <dependency> <groupId>org.springframework.ai</groupId> <artifactId>spring-ai-bom</artifactId> <version>1.0.0-SNAPSHOT</version> <type>pom</type> <scope>import</scope> </dependency> </dependencies> </dependencyManagement> </project> 编写Controllerpackage com.example.ai.ollamachat.controller; import org.springframework.ai.chat.ChatResponse; import org.springframework.ai.chat.messages.UserMessage; import org.springframework.ai.chat.prompt.Prompt; import org.springframework.ai.ollama.OllamaChatClient; import org.springframework.beans.factory.annotation.Autowired; import org.springframework.web.bind.annotation.GetMapping; import org.springframework.web.bind.annotation.RequestParam; import org.springframework.web.bind.annotation.RestController; import reactor.core.publisher.Flux; import java.util.Map; @RestController public class ChatController { private final OllamaChatClient chatClient; @Autowired public ChatController(OllamaChatClient chatClient) { this.chatClient = chatClient; } @GetMapping("/ai/generate") public Map generate(@RequestParam(value = "message", defaultValue = "Tell me a joke") String message) { return Map.of("generation", chatClient.call(message)); } @GetMapping("/ai/generateStream") public Flux<ChatResponse> generateStream(@RequestParam(value = "message", defaultValue = "Tell me a joke") String message) { Prompt prompt = new Prompt(new UserMessage(message)); return chatClient.stream(prompt); } }application.properties配置spring.application.name=ollama-chat spring.ai.ollama.base-url=http://localhost:11434/ spring.ai.ollama.chat.enabled=true spring.ai.ollama.chat.options.model=llama2 spring.ai.ollama.chat.options.temperature=0.7更多参数配置见文档 https://docs.spring.io/spring-ai/reference/api/clients/ollama-chat.html测试访问http://localhost:8080/ai/generate返回结果{"generation":"\nWhy don't scientists trust atoms? Because they make up everything! \uD83D\uDE02"}说明OllamaApi 聊天接口和构建块图:更多其它LLM接入,后续更新。 -

Nacos配置管理、Feign远程调用、Gateway网关 SpringCloud实用篇020.学习目标1.Nacos配置管理Nacos除了可以做注册中心,同样可以做配置管理来使用。1.1.统一配置管理当微服务部署的实例越来越多,达到数十、数百时,逐个修改微服务配置就会让人抓狂,而且很容易出错。我们需要一种统一配置管理方案,可以集中管理所有实例的配置。Nacos一方面可以将配置集中管理,另一方可以在配置变更时,及时通知微服务,实现配置的热更新。1.1.1.在nacos中添加配置文件如何在nacos中管理配置呢?然后在弹出的表单中,填写配置信息:注意:项目的核心配置,需要热更新的配置才有放到nacos管理的必要。基本不会变更的一些配置还是保存在微服务本地比较好。1.1.2.从微服务拉取配置微服务要拉取nacos中管理的配置,并且与本地的application.yml配置合并,才能完成项目启动。但如果尚未读取application.yml,又如何得知nacos地址呢?因此spring引入了一种新的配置文件:bootstrap.yaml文件,会在application.yml之前被读取,流程如下:1)引入nacos-config依赖首先,在user-service服务中,引入nacos-config的客户端依赖:<!--nacos配置管理依赖--> <dependency> <groupId>com.alibaba.cloud</groupId> <artifactId>spring-cloud-starter-alibaba-nacos-config</artifactId> </dependency>2)添加bootstrap.yaml然后,在user-service中添加一个bootstrap.yaml文件,内容如下:spring: application: name: userservice # 服务名称 profiles: active: dev #开发环境,这里是dev cloud: nacos: server-addr: localhost:8848 # Nacos地址 config: file-extension: yaml # 文件后缀名这里会根据spring.cloud.nacos.server-addr获取nacos地址,再根据${spring.application.name}-${spring.profiles.active}.${spring.cloud.nacos.config.file-extension}作为文件id,来读取配置。本例中,就是去读取userservice-dev.yaml:3)读取nacos配置在user-service中的UserController中添加业务逻辑,读取pattern.dateformat配置:完整代码:package cn.itcast.user.web; import cn.itcast.user.pojo.User; import cn.itcast.user.service.UserService; import lombok.extern.slf4j.Slf4j; import org.springframework.beans.factory.annotation.Autowired; import org.springframework.beans.factory.annotation.Value; import org.springframework.web.bind.annotation.*; import java.time.LocalDateTime; import java.time.format.DateTimeFormatter; @Slf4j @RestController @RequestMapping("/user") public class UserController { @Autowired private UserService userService; @Value("${pattern.dateformat}") private String dateformat; @GetMapping("now") public String now(){ return LocalDateTime.now().format(DateTimeFormatter.ofPattern(dateformat)); } // ...略 }在页面访问,可以看到效果:1.2.配置热更新我们最终的目的,是修改nacos中的配置后,微服务中无需重启即可让配置生效,也就是配置热更新。要实现配置热更新,可以使用两种方式:1.2.1.方式一在@Value注入的变量所在类上添加注解@RefreshScope:1.2.2.方式二使用@ConfigurationProperties注解代替@Value注解。在user-service服务中,添加一个类,读取patterrn.dateformat属性:package cn.itcast.user.config; import lombok.Data; import org.springframework.boot.context.properties.ConfigurationProperties; import org.springframework.stereotype.Component; @Component @Data @ConfigurationProperties(prefix = "pattern") public class PatternProperties { private String dateformat; }在UserController中使用这个类代替@Value:完整代码:package cn.itcast.user.web; import cn.itcast.user.config.PatternProperties; import cn.itcast.user.pojo.User; import cn.itcast.user.service.UserService; import lombok.extern.slf4j.Slf4j; import org.springframework.beans.factory.annotation.Autowired; import org.springframework.web.bind.annotation.GetMapping; import org.springframework.web.bind.annotation.PathVariable; import org.springframework.web.bind.annotation.RequestMapping; import org.springframework.web.bind.annotation.RestController; import java.time.LocalDateTime; import java.time.format.DateTimeFormatter; @Slf4j @RestController @RequestMapping("/user") public class UserController { @Autowired private UserService userService; @Autowired private PatternProperties patternProperties; @GetMapping("now") public String now(){ return LocalDateTime.now().format(DateTimeFormatter.ofPattern(patternProperties.getDateformat())); } // 略 }1.3.配置共享其实微服务启动时,会去nacos读取多个配置文件,例如:[spring.application.name]-[spring.profiles.active].yaml,例如:userservice-dev.yaml[spring.application.name].yaml,例如:userservice.yaml而[spring.application.name].yaml不包含环境,因此可以被多个环境共享。下面我们通过案例来测试配置共享1)添加一个环境共享配置我们在nacos中添加一个userservice.yaml文件:2)在user-service中读取共享配置在user-service服务中,修改PatternProperties类,读取新添加的属性:在user-service服务中,修改UserController,添加一个方法:3)运行两个UserApplication,使用不同的profile修改UserApplication2这个启动项,改变其profile值:这样,UserApplication(8081)使用的profile是dev,UserApplication2(8082)使用的profile是test。启动UserApplication和UserApplication2访问http://localhost:8081/user/prop,结果:访问http://localhost:8082/user/prop,结果:可以看出来,不管是dev,还是test环境,都读取到了envSharedValue这个属性的值。4)配置共享的优先级当nacos、服务本地同时出现相同属性时,优先级有高低之分:1.4.搭建Nacos集群Nacos生产环境下一定要部署为集群状态,部署方式参考课前资料中的文档:2.Feign远程调用先来看我们以前利用RestTemplate发起远程调用的代码:存在下面的问题:•代码可读性差,编程体验不统一•参数复杂URL难以维护Feign是一个声明式的http客户端,官方地址:https://github.com/OpenFeign/feign其作用就是帮助我们优雅的实现http请求的发送,解决上面提到的问题。2.1.Feign替代RestTemplateFegin的使用步骤如下:1)引入依赖我们在order-service服务的pom文件中引入feign的依赖:<dependency> <groupId>org.springframework.cloud</groupId> <artifactId>spring-cloud-starter-openfeign</artifactId> </dependency>2)添加注解在order-service的启动类添加注解开启Feign的功能:3)编写Feign的客户端在order-service中新建一个接口,内容如下:package cn.itcast.order.client; import cn.itcast.order.pojo.User; import org.springframework.cloud.openfeign.FeignClient; import org.springframework.web.bind.annotation.GetMapping; import org.springframework.web.bind.annotation.PathVariable; @FeignClient("userservice") public interface UserClient { @GetMapping("/user/{id}") User findById(@PathVariable("id") Long id); }这个客户端主要是基于SpringMVC的注解来声明远程调用的信息,比如:服务名称:userservice请求方式:GET请求路径:/user/{id}请求参数:Long id返回值类型:User这样,Feign就可以帮助我们发送http请求,无需自己使用RestTemplate来发送了。4)测试修改order-service中的OrderService类中的queryOrderById方法,使用Feign客户端代替RestTemplate:是不是看起来优雅多了。5)总结使用Feign的步骤:① 引入依赖② 添加@EnableFeignClients注解③ 编写FeignClient接口④ 使用FeignClient中定义的方法代替RestTemplate2.2.自定义配置Feign可以支持很多的自定义配置,如下表所示:类型作用说明feign.Logger.Level修改日志级别包含四种不同的级别:NONE、BASIC、HEADERS、FULLfeign.codec.Decoder响应结果的解析器http远程调用的结果做解析,例如解析json字符串为java对象feign.codec.Encoder请求参数编码将请求参数编码,便于通过http请求发送feign. Contract支持的注解格式默认是SpringMVC的注解feign. Retryer失败重试机制请求失败的重试机制,默认是没有,不过会使用Ribbon的重试一般情况下,默认值就能满足我们使用,如果要自定义时,只需要创建自定义的@Bean覆盖默认Bean即可。下面以日志为例来演示如何自定义配置。2.2.1.配置文件方式基于配置文件修改feign的日志级别可以针对单个服务:feign: client: config: userservice: # 针对某个微服务的配置 loggerLevel: FULL # 日志级别 也可以针对所有服务:feign: client: config: default: # 这里用default就是全局配置,如果是写服务名称,则是针对某个微服务的配置 loggerLevel: FULL # 日志级别 而日志的级别分为四种:NONE:不记录任何日志信息,这是默认值。BASIC:仅记录请求的方法,URL以及响应状态码和执行时间HEADERS:在BASIC的基础上,额外记录了请求和响应的头信息FULL:记录所有请求和响应的明细,包括头信息、请求体、元数据。2.2.2.Java代码方式也可以基于Java代码来修改日志级别,先声明一个类,然后声明一个Logger.Level的对象:public class DefaultFeignConfiguration { @Bean public Logger.Level feignLogLevel(){ return Logger.Level.BASIC; // 日志级别为BASIC } }如果要全局生效,将其放到启动类的@EnableFeignClients这个注解中:@EnableFeignClients(defaultConfiguration = DefaultFeignConfiguration .class) 如果是局部生效,则把它放到对应的@FeignClient这个注解中:@FeignClient(value = "userservice", configuration = DefaultFeignConfiguration .class) 2.3.Feign使用优化Feign底层发起http请求,依赖于其它的框架。其底层客户端实现包括:•URLConnection:默认实现,不支持连接池•Apache HttpClient :支持连接池•OKHttp:支持连接池因此提高Feign的性能主要手段就是使用连接池代替默认的URLConnection。这里我们用Apache的HttpClient来演示。1)引入依赖在order-service的pom文件中引入Apache的HttpClient依赖:<!--httpClient的依赖 --> <dependency> <groupId>io.github.openfeign</groupId> <artifactId>feign-httpclient</artifactId> </dependency>2)配置连接池在order-service的application.yml中添加配置:feign: client: config: default: # default全局的配置 loggerLevel: BASIC # 日志级别,BASIC就是基本的请求和响应信息 httpclient: enabled: true # 开启feign对HttpClient的支持 max-connections: 200 # 最大的连接数 max-connections-per-route: 50 # 每个路径的最大连接数接下来,在FeignClientFactoryBean中的loadBalance方法中打断点:Debug方式启动order-service服务,可以看到这里的client,底层就是Apache HttpClient:总结,Feign的优化:1.日志级别尽量用basic2.使用HttpClient或OKHttp代替URLConnection① 引入feign-httpClient依赖② 配置文件开启httpClient功能,设置连接池参数2.4.最佳实践所谓最近实践,就是使用过程中总结的经验,最好的一种使用方式。自习观察可以发现,Feign的客户端与服务提供者的controller代码非常相似:feign客户端:UserController:有没有一种办法简化这种重复的代码编写呢?2.4.1.继承方式一样的代码可以通过继承来共享:1)定义一个API接口,利用定义方法,并基于SpringMVC注解做声明。2)Feign客户端和Controller都集成改接口优点:简单实现了代码共享缺点:服务提供方、服务消费方紧耦合参数列表中的注解映射并不会继承,因此Controller中必须再次声明方法、参数列表、注解2.4.2.抽取方式将Feign的Client抽取为独立模块,并且把接口有关的POJO、默认的Feign配置都放到这个模块中,提供给所有消费者使用。例如,将UserClient、User、Feign的默认配置都抽取到一个feign-api包中,所有微服务引用该依赖包,即可直接使用。2.4.3.实现基于抽取的最佳实践1)抽取首先创建一个module,命名为feign-api:项目结构:在feign-api中然后引入feign的starter依赖<dependency> <groupId>org.springframework.cloud</groupId> <artifactId>spring-cloud-starter-openfeign</artifactId> </dependency>然后,order-service中编写的UserClient、User、DefaultFeignConfiguration都复制到feign-api项目中2)在order-service中使用feign-api首先,删除order-service中的UserClient、User、DefaultFeignConfiguration等类或接口。在order-service的pom文件中中引入feign-api的依赖:<dependency> <groupId>cn.itcast.demo</groupId> <artifactId>feign-api</artifactId> <version>1.0</version> </dependency>修改order-service中的所有与上述三个组件有关的导包部分,改成导入feign-api中的包3)重启测试重启后,发现服务报错了:这是因为UserClient现在在cn.itcast.feign.clients包下,而order-service的@EnableFeignClients注解是在cn.itcast.order包下,不在同一个包,无法扫描到UserClient。4)解决扫描包问题方式一:指定Feign应该扫描的包:@EnableFeignClients(basePackages = "cn.itcast.feign.clients")方式二:指定需要加载的Client接口:@EnableFeignClients(clients = {UserClient.class})3.Gateway服务网关Spring Cloud Gateway 是 Spring Cloud 的一个全新项目,该项目是基于 Spring 5.0,Spring Boot 2.0 和 Project Reactor 等响应式编程和事件流技术开发的网关,它旨在为微服务架构提供一种简单有效的统一的 API 路由管理方式。3.1.为什么需要网关Gateway网关是我们服务的守门神,所有微服务的统一入口。网关的核心功能特性:请求路由权限控制限流架构图:权限控制:网关作为微服务入口,需要校验用户是是否有请求资格,如果没有则进行拦截。路由和负载均衡:一切请求都必须先经过gateway,但网关不处理业务,而是根据某种规则,把请求转发到某个微服务,这个过程叫做路由。当然路由的目标服务有多个时,还需要做负载均衡。限流:当请求流量过高时,在网关中按照下流的微服务能够接受的速度来放行请求,避免服务压力过大。在SpringCloud中网关的实现包括两种:gatewayzuulZuul是基于Servlet的实现,属于阻塞式编程。而SpringCloudGateway则是基于Spring5中提供的WebFlux,属于响应式编程的实现,具备更好的性能。3.2.gateway快速入门下面,我们就演示下网关的基本路由功能。基本步骤如下:创建SpringBoot工程gateway,引入网关依赖编写启动类编写基础配置和路由规则启动网关服务进行测试1)创建gateway服务,引入依赖创建服务:引入依赖:<!--网关--> <dependency> <groupId>org.springframework.cloud</groupId> <artifactId>spring-cloud-starter-gateway</artifactId> </dependency> <!--nacos服务发现依赖--> <dependency> <groupId>com.alibaba.cloud</groupId> <artifactId>spring-cloud-starter-alibaba-nacos-discovery</artifactId> </dependency>2)编写启动类package cn.itcast.gateway; import org.springframework.boot.SpringApplication; import org.springframework.boot.autoconfigure.SpringBootApplication; @SpringBootApplication public class GatewayApplication { public static void main(String[] args) { SpringApplication.run(GatewayApplication.class, args); } }3)编写基础配置和路由规则创建application.yml文件,内容如下:server: port: 10010 # 网关端口 spring: application: name: gateway # 服务名称 cloud: nacos: server-addr: localhost:8848 # nacos地址 gateway: routes: # 网关路由配置 - id: user-service # 路由id,自定义,只要唯一即可 # uri: http://127.0.0.1:8081 # 路由的目标地址 http就是固定地址 uri: lb://userservice # 路由的目标地址 lb就是负载均衡,后面跟服务名称 predicates: # 路由断言,也就是判断请求是否符合路由规则的条件 - Path=/user/** # 这个是按照路径匹配,只要以/user/开头就符合要求我们将符合Path 规则的一切请求,都代理到 uri参数指定的地址。本例中,我们将 /user/**开头的请求,代理到lb://userservice,lb是负载均衡,根据服务名拉取服务列表,实现负载均衡。4)重启测试重启网关,访问http://localhost:10010/user/1时,符合/user/**规则,请求转发到uri:http://userservice/user/1,得到了结果:5)网关路由的流程图整个访问的流程如下:总结:网关搭建步骤:创建项目,引入nacos服务发现和gateway依赖配置application.yml,包括服务基本信息、nacos地址、路由路由配置包括:路由id:路由的唯一标示路由目标(uri):路由的目标地址,http代表固定地址,lb代表根据服务名负载均衡路由断言(predicates):判断路由的规则,路由过滤器(filters):对请求或响应做处理接下来,就重点来学习路由断言和路由过滤器的详细知识3.3.断言工厂我们在配置文件中写的断言规则只是字符串,这些字符串会被Predicate Factory读取并处理,转变为路由判断的条件例如Path=/user/**是按照路径匹配,这个规则是由org.springframework.cloud.gateway.handler.predicate.PathRoutePredicateFactory类来处理的,像这样的断言工厂在SpringCloudGateway还有十几个:名称说明示例After是某个时间点后的请求- After=2037-01-20T17:42:47.789-07:00[America/Denver]Before是某个时间点之前的请求- Before=2031-04-13T15:14:47.433+08:00[Asia/Shanghai]Between是某两个时间点之前的请求- Between=2037-01-20T17:42:47.789-07:00[America/Denver], 2037-01-21T17:42:47.789-07:00[America/Denver]Cookie请求必须包含某些cookie- Cookie=chocolate, ch.pHeader请求必须包含某些header- Header=X-Request-Id, \d+Host请求必须是访问某个host(域名)- Host=.somehost.org,.anotherhost.orgMethod请求方式必须是指定方式- Method=GET,POSTPath请求路径必须符合指定规则- Path=/red/{segment},/blue/**Query请求参数必须包含指定参数- Query=name, Jack或者- Query=nameRemoteAddr请求者的ip必须是指定范围- RemoteAddr=192.168.1.1/24Weight权重处理 我们只需要掌握Path这种路由工程就可以了。3.4.过滤器工厂GatewayFilter是网关中提供的一种过滤器,可以对进入网关的请求和微服务返回的响应做处理:3.4.1.路由过滤器的种类Spring提供了31种不同的路由过滤器工厂。例如:名称说明AddRequestHeader给当前请求添加一个请求头RemoveRequestHeader移除请求中的一个请求头AddResponseHeader给响应结果中添加一个响应头RemoveResponseHeader从响应结果中移除有一个响应头RequestRateLimiter限制请求的流量3.4.2.请求头过滤器下面我们以AddRequestHeader 为例来讲解。需求:给所有进入userservice的请求添加一个请求头:Truth=itcast is freaking awesome!只需要修改gateway服务的application.yml文件,添加路由过滤即可:spring: cloud: gateway: routes: - id: user-service uri: lb://userservice predicates: - Path=/user/** filters: # 过滤器 - AddRequestHeader=Truth, Itcast is freaking awesome! # 添加请求头当前过滤器写在userservice路由下,因此仅仅对访问userservice的请求有效。3.4.3.默认过滤器如果要对所有的路由都生效,则可以将过滤器工厂写到default下。格式如下:spring: cloud: gateway: routes: - id: user-service uri: lb://userservice predicates: - Path=/user/** default-filters: # 默认过滤项 - AddRequestHeader=Truth, Itcast is freaking awesome! 3.4.4.总结过滤器的作用是什么?① 对路由的请求或响应做加工处理,比如添加请求头② 配置在路由下的过滤器只对当前路由的请求生效defaultFilters的作用是什么?① 对所有路由都生效的过滤器3.5.全局过滤器上一节学习的过滤器,网关提供了31种,但每一种过滤器的作用都是固定的。如果我们希望拦截请求,做自己的业务逻辑则没办法实现。3.5.1.全局过滤器作用全局过滤器的作用也是处理一切进入网关的请求和微服务响应,与GatewayFilter的作用一样。区别在于GatewayFilter通过配置定义,处理逻辑是固定的;而GlobalFilter的逻辑需要自己写代码实现。定义方式是实现GlobalFilter接口。public interface GlobalFilter { /** * 处理当前请求,有必要的话通过{@link GatewayFilterChain}将请求交给下一个过滤器处理 * * @param exchange 请求上下文,里面可以获取Request、Response等信息 * @param chain 用来把请求委托给下一个过滤器 * @return {@code Mono<Void>} 返回标示当前过滤器业务结束 */ Mono<Void> filter(ServerWebExchange exchange, GatewayFilterChain chain); }在filter中编写自定义逻辑,可以实现下列功能:登录状态判断权限校验请求限流等3.5.2.自定义全局过滤器需求:定义全局过滤器,拦截请求,判断请求的参数是否满足下面条件:参数中是否有authorization,authorization参数值是否为admin如果同时满足则放行,否则拦截实现:在gateway中定义一个过滤器:package cn.itcast.gateway.filters; import org.springframework.cloud.gateway.filter.GatewayFilterChain; import org.springframework.cloud.gateway.filter.GlobalFilter; import org.springframework.core.annotation.Order; import org.springframework.http.HttpStatus; import org.springframework.stereotype.Component; import org.springframework.web.server.ServerWebExchange; import reactor.core.publisher.Mono; @Order(-1) @Component public class AuthorizeFilter implements GlobalFilter { @Override public Mono<Void> filter(ServerWebExchange exchange, GatewayFilterChain chain) { // 1.获取请求参数 MultiValueMap<String, String> params = exchange.getRequest().getQueryParams(); // 2.获取authorization参数 String auth = params.getFirst("authorization"); // 3.校验 if ("admin".equals(auth)) { // 放行 return chain.filter(exchange); } // 4.拦截 // 4.1.禁止访问,设置状态码 exchange.getResponse().setStatusCode(HttpStatus.FORBIDDEN); // 4.2.结束处理 return exchange.getResponse().setComplete(); } }3.5.3.过滤器执行顺序请求进入网关会碰到三类过滤器:当前路由的过滤器、DefaultFilter、GlobalFilter请求路由后,会将当前路由过滤器和DefaultFilter、GlobalFilter,合并到一个过滤器链(集合)中,排序后依次执行每个过滤器:排序的规则是什么呢?每一个过滤器都必须指定一个int类型的order值,order值越小,优先级越高,执行顺序越靠前。GlobalFilter通过实现Ordered接口,或者添加@Order注解来指定order值,由我们自己指定路由过滤器和defaultFilter的order由Spring指定,默认是按照声明顺序从1递增。当过滤器的order值一样时,会按照 defaultFilter > 路由过滤器 > GlobalFilter的顺序执行。详细内容,可以查看源码:org.springframework.cloud.gateway.route.RouteDefinitionRouteLocator#getFilters()方法是先加载defaultFilters,然后再加载某个route的filters,然后合并。org.springframework.cloud.gateway.handler.FilteringWebHandler#handle()方法会加载全局过滤器,与前面的过滤器合并后根据order排序,组织过滤器链3.6.跨域问题3.6.1.什么是跨域问题跨域:域名不一致就是跨域,主要包括:域名不同: www.taobao.com 和 www.taobao.org 和 www.jd.com 和 miaosha.jd.com域名相同,端口不同:localhost:8080和localhost8081跨域问题:浏览器禁止请求的发起者与服务端发生跨域ajax请求,请求被浏览器拦截的问题解决方案:CORS,这个以前应该学习过,这里不再赘述了。不知道的小伙伴可以查看https://www.ruanyifeng.com/blog/2016/04/cors.html3.6.2.模拟跨域问题找到课前资料的页面文件:放入tomcat或者nginx这样的web服务器中,启动并访问。可以在浏览器控制台看到下面的错误:从localhost:8090访问localhost:10010,端口不同,显然是跨域的请求。3.6.3.解决跨域问题在gateway服务的application.yml文件中,添加下面的配置:spring: cloud: gateway: # 。。。 globalcors: # 全局的跨域处理 add-to-simple-url-handler-mapping: true # 解决options请求被拦截问题 corsConfigurations: '[/**]': allowedOrigins: # 允许哪些网站的跨域请求 - "http://localhost:8090" allowedMethods: # 允许的跨域ajax的请求方式 - "GET" - "POST" - "DELETE" - "PUT" - "OPTIONS" allowedHeaders: "*" # 允许在请求中携带的头信息 allowCredentials: true # 是否允许携带cookie maxAge: 360000 # 这次跨域检测的有效期

Nacos配置管理、Feign远程调用、Gateway网关 SpringCloud实用篇020.学习目标1.Nacos配置管理Nacos除了可以做注册中心,同样可以做配置管理来使用。1.1.统一配置管理当微服务部署的实例越来越多,达到数十、数百时,逐个修改微服务配置就会让人抓狂,而且很容易出错。我们需要一种统一配置管理方案,可以集中管理所有实例的配置。Nacos一方面可以将配置集中管理,另一方可以在配置变更时,及时通知微服务,实现配置的热更新。1.1.1.在nacos中添加配置文件如何在nacos中管理配置呢?然后在弹出的表单中,填写配置信息:注意:项目的核心配置,需要热更新的配置才有放到nacos管理的必要。基本不会变更的一些配置还是保存在微服务本地比较好。1.1.2.从微服务拉取配置微服务要拉取nacos中管理的配置,并且与本地的application.yml配置合并,才能完成项目启动。但如果尚未读取application.yml,又如何得知nacos地址呢?因此spring引入了一种新的配置文件:bootstrap.yaml文件,会在application.yml之前被读取,流程如下:1)引入nacos-config依赖首先,在user-service服务中,引入nacos-config的客户端依赖:<!--nacos配置管理依赖--> <dependency> <groupId>com.alibaba.cloud</groupId> <artifactId>spring-cloud-starter-alibaba-nacos-config</artifactId> </dependency>2)添加bootstrap.yaml然后,在user-service中添加一个bootstrap.yaml文件,内容如下:spring: application: name: userservice # 服务名称 profiles: active: dev #开发环境,这里是dev cloud: nacos: server-addr: localhost:8848 # Nacos地址 config: file-extension: yaml # 文件后缀名这里会根据spring.cloud.nacos.server-addr获取nacos地址,再根据${spring.application.name}-${spring.profiles.active}.${spring.cloud.nacos.config.file-extension}作为文件id,来读取配置。本例中,就是去读取userservice-dev.yaml:3)读取nacos配置在user-service中的UserController中添加业务逻辑,读取pattern.dateformat配置:完整代码:package cn.itcast.user.web; import cn.itcast.user.pojo.User; import cn.itcast.user.service.UserService; import lombok.extern.slf4j.Slf4j; import org.springframework.beans.factory.annotation.Autowired; import org.springframework.beans.factory.annotation.Value; import org.springframework.web.bind.annotation.*; import java.time.LocalDateTime; import java.time.format.DateTimeFormatter; @Slf4j @RestController @RequestMapping("/user") public class UserController { @Autowired private UserService userService; @Value("${pattern.dateformat}") private String dateformat; @GetMapping("now") public String now(){ return LocalDateTime.now().format(DateTimeFormatter.ofPattern(dateformat)); } // ...略 }在页面访问,可以看到效果:1.2.配置热更新我们最终的目的,是修改nacos中的配置后,微服务中无需重启即可让配置生效,也就是配置热更新。要实现配置热更新,可以使用两种方式:1.2.1.方式一在@Value注入的变量所在类上添加注解@RefreshScope:1.2.2.方式二使用@ConfigurationProperties注解代替@Value注解。在user-service服务中,添加一个类,读取patterrn.dateformat属性:package cn.itcast.user.config; import lombok.Data; import org.springframework.boot.context.properties.ConfigurationProperties; import org.springframework.stereotype.Component; @Component @Data @ConfigurationProperties(prefix = "pattern") public class PatternProperties { private String dateformat; }在UserController中使用这个类代替@Value:完整代码:package cn.itcast.user.web; import cn.itcast.user.config.PatternProperties; import cn.itcast.user.pojo.User; import cn.itcast.user.service.UserService; import lombok.extern.slf4j.Slf4j; import org.springframework.beans.factory.annotation.Autowired; import org.springframework.web.bind.annotation.GetMapping; import org.springframework.web.bind.annotation.PathVariable; import org.springframework.web.bind.annotation.RequestMapping; import org.springframework.web.bind.annotation.RestController; import java.time.LocalDateTime; import java.time.format.DateTimeFormatter; @Slf4j @RestController @RequestMapping("/user") public class UserController { @Autowired private UserService userService; @Autowired private PatternProperties patternProperties; @GetMapping("now") public String now(){ return LocalDateTime.now().format(DateTimeFormatter.ofPattern(patternProperties.getDateformat())); } // 略 }1.3.配置共享其实微服务启动时,会去nacos读取多个配置文件,例如:[spring.application.name]-[spring.profiles.active].yaml,例如:userservice-dev.yaml[spring.application.name].yaml,例如:userservice.yaml而[spring.application.name].yaml不包含环境,因此可以被多个环境共享。下面我们通过案例来测试配置共享1)添加一个环境共享配置我们在nacos中添加一个userservice.yaml文件:2)在user-service中读取共享配置在user-service服务中,修改PatternProperties类,读取新添加的属性:在user-service服务中,修改UserController,添加一个方法:3)运行两个UserApplication,使用不同的profile修改UserApplication2这个启动项,改变其profile值:这样,UserApplication(8081)使用的profile是dev,UserApplication2(8082)使用的profile是test。启动UserApplication和UserApplication2访问http://localhost:8081/user/prop,结果:访问http://localhost:8082/user/prop,结果:可以看出来,不管是dev,还是test环境,都读取到了envSharedValue这个属性的值。4)配置共享的优先级当nacos、服务本地同时出现相同属性时,优先级有高低之分:1.4.搭建Nacos集群Nacos生产环境下一定要部署为集群状态,部署方式参考课前资料中的文档:2.Feign远程调用先来看我们以前利用RestTemplate发起远程调用的代码:存在下面的问题:•代码可读性差,编程体验不统一•参数复杂URL难以维护Feign是一个声明式的http客户端,官方地址:https://github.com/OpenFeign/feign其作用就是帮助我们优雅的实现http请求的发送,解决上面提到的问题。2.1.Feign替代RestTemplateFegin的使用步骤如下:1)引入依赖我们在order-service服务的pom文件中引入feign的依赖:<dependency> <groupId>org.springframework.cloud</groupId> <artifactId>spring-cloud-starter-openfeign</artifactId> </dependency>2)添加注解在order-service的启动类添加注解开启Feign的功能:3)编写Feign的客户端在order-service中新建一个接口,内容如下:package cn.itcast.order.client; import cn.itcast.order.pojo.User; import org.springframework.cloud.openfeign.FeignClient; import org.springframework.web.bind.annotation.GetMapping; import org.springframework.web.bind.annotation.PathVariable; @FeignClient("userservice") public interface UserClient { @GetMapping("/user/{id}") User findById(@PathVariable("id") Long id); }这个客户端主要是基于SpringMVC的注解来声明远程调用的信息,比如:服务名称:userservice请求方式:GET请求路径:/user/{id}请求参数:Long id返回值类型:User这样,Feign就可以帮助我们发送http请求,无需自己使用RestTemplate来发送了。4)测试修改order-service中的OrderService类中的queryOrderById方法,使用Feign客户端代替RestTemplate:是不是看起来优雅多了。5)总结使用Feign的步骤:① 引入依赖② 添加@EnableFeignClients注解③ 编写FeignClient接口④ 使用FeignClient中定义的方法代替RestTemplate2.2.自定义配置Feign可以支持很多的自定义配置,如下表所示:类型作用说明feign.Logger.Level修改日志级别包含四种不同的级别:NONE、BASIC、HEADERS、FULLfeign.codec.Decoder响应结果的解析器http远程调用的结果做解析,例如解析json字符串为java对象feign.codec.Encoder请求参数编码将请求参数编码,便于通过http请求发送feign. Contract支持的注解格式默认是SpringMVC的注解feign. Retryer失败重试机制请求失败的重试机制,默认是没有,不过会使用Ribbon的重试一般情况下,默认值就能满足我们使用,如果要自定义时,只需要创建自定义的@Bean覆盖默认Bean即可。下面以日志为例来演示如何自定义配置。2.2.1.配置文件方式基于配置文件修改feign的日志级别可以针对单个服务:feign: client: config: userservice: # 针对某个微服务的配置 loggerLevel: FULL # 日志级别 也可以针对所有服务:feign: client: config: default: # 这里用default就是全局配置,如果是写服务名称,则是针对某个微服务的配置 loggerLevel: FULL # 日志级别 而日志的级别分为四种:NONE:不记录任何日志信息,这是默认值。BASIC:仅记录请求的方法,URL以及响应状态码和执行时间HEADERS:在BASIC的基础上,额外记录了请求和响应的头信息FULL:记录所有请求和响应的明细,包括头信息、请求体、元数据。2.2.2.Java代码方式也可以基于Java代码来修改日志级别,先声明一个类,然后声明一个Logger.Level的对象:public class DefaultFeignConfiguration { @Bean public Logger.Level feignLogLevel(){ return Logger.Level.BASIC; // 日志级别为BASIC } }如果要全局生效,将其放到启动类的@EnableFeignClients这个注解中:@EnableFeignClients(defaultConfiguration = DefaultFeignConfiguration .class) 如果是局部生效,则把它放到对应的@FeignClient这个注解中:@FeignClient(value = "userservice", configuration = DefaultFeignConfiguration .class) 2.3.Feign使用优化Feign底层发起http请求,依赖于其它的框架。其底层客户端实现包括:•URLConnection:默认实现,不支持连接池•Apache HttpClient :支持连接池•OKHttp:支持连接池因此提高Feign的性能主要手段就是使用连接池代替默认的URLConnection。这里我们用Apache的HttpClient来演示。1)引入依赖在order-service的pom文件中引入Apache的HttpClient依赖:<!--httpClient的依赖 --> <dependency> <groupId>io.github.openfeign</groupId> <artifactId>feign-httpclient</artifactId> </dependency>2)配置连接池在order-service的application.yml中添加配置:feign: client: config: default: # default全局的配置 loggerLevel: BASIC # 日志级别,BASIC就是基本的请求和响应信息 httpclient: enabled: true # 开启feign对HttpClient的支持 max-connections: 200 # 最大的连接数 max-connections-per-route: 50 # 每个路径的最大连接数接下来,在FeignClientFactoryBean中的loadBalance方法中打断点:Debug方式启动order-service服务,可以看到这里的client,底层就是Apache HttpClient:总结,Feign的优化:1.日志级别尽量用basic2.使用HttpClient或OKHttp代替URLConnection① 引入feign-httpClient依赖② 配置文件开启httpClient功能,设置连接池参数2.4.最佳实践所谓最近实践,就是使用过程中总结的经验,最好的一种使用方式。自习观察可以发现,Feign的客户端与服务提供者的controller代码非常相似:feign客户端:UserController:有没有一种办法简化这种重复的代码编写呢?2.4.1.继承方式一样的代码可以通过继承来共享:1)定义一个API接口,利用定义方法,并基于SpringMVC注解做声明。2)Feign客户端和Controller都集成改接口优点:简单实现了代码共享缺点:服务提供方、服务消费方紧耦合参数列表中的注解映射并不会继承,因此Controller中必须再次声明方法、参数列表、注解2.4.2.抽取方式将Feign的Client抽取为独立模块,并且把接口有关的POJO、默认的Feign配置都放到这个模块中,提供给所有消费者使用。例如,将UserClient、User、Feign的默认配置都抽取到一个feign-api包中,所有微服务引用该依赖包,即可直接使用。2.4.3.实现基于抽取的最佳实践1)抽取首先创建一个module,命名为feign-api:项目结构:在feign-api中然后引入feign的starter依赖<dependency> <groupId>org.springframework.cloud</groupId> <artifactId>spring-cloud-starter-openfeign</artifactId> </dependency>然后,order-service中编写的UserClient、User、DefaultFeignConfiguration都复制到feign-api项目中2)在order-service中使用feign-api首先,删除order-service中的UserClient、User、DefaultFeignConfiguration等类或接口。在order-service的pom文件中中引入feign-api的依赖:<dependency> <groupId>cn.itcast.demo</groupId> <artifactId>feign-api</artifactId> <version>1.0</version> </dependency>修改order-service中的所有与上述三个组件有关的导包部分,改成导入feign-api中的包3)重启测试重启后,发现服务报错了:这是因为UserClient现在在cn.itcast.feign.clients包下,而order-service的@EnableFeignClients注解是在cn.itcast.order包下,不在同一个包,无法扫描到UserClient。4)解决扫描包问题方式一:指定Feign应该扫描的包:@EnableFeignClients(basePackages = "cn.itcast.feign.clients")方式二:指定需要加载的Client接口:@EnableFeignClients(clients = {UserClient.class})3.Gateway服务网关Spring Cloud Gateway 是 Spring Cloud 的一个全新项目,该项目是基于 Spring 5.0,Spring Boot 2.0 和 Project Reactor 等响应式编程和事件流技术开发的网关,它旨在为微服务架构提供一种简单有效的统一的 API 路由管理方式。3.1.为什么需要网关Gateway网关是我们服务的守门神,所有微服务的统一入口。网关的核心功能特性:请求路由权限控制限流架构图:权限控制:网关作为微服务入口,需要校验用户是是否有请求资格,如果没有则进行拦截。路由和负载均衡:一切请求都必须先经过gateway,但网关不处理业务,而是根据某种规则,把请求转发到某个微服务,这个过程叫做路由。当然路由的目标服务有多个时,还需要做负载均衡。限流:当请求流量过高时,在网关中按照下流的微服务能够接受的速度来放行请求,避免服务压力过大。在SpringCloud中网关的实现包括两种:gatewayzuulZuul是基于Servlet的实现,属于阻塞式编程。而SpringCloudGateway则是基于Spring5中提供的WebFlux,属于响应式编程的实现,具备更好的性能。3.2.gateway快速入门下面,我们就演示下网关的基本路由功能。基本步骤如下:创建SpringBoot工程gateway,引入网关依赖编写启动类编写基础配置和路由规则启动网关服务进行测试1)创建gateway服务,引入依赖创建服务:引入依赖:<!--网关--> <dependency> <groupId>org.springframework.cloud</groupId> <artifactId>spring-cloud-starter-gateway</artifactId> </dependency> <!--nacos服务发现依赖--> <dependency> <groupId>com.alibaba.cloud</groupId> <artifactId>spring-cloud-starter-alibaba-nacos-discovery</artifactId> </dependency>2)编写启动类package cn.itcast.gateway; import org.springframework.boot.SpringApplication; import org.springframework.boot.autoconfigure.SpringBootApplication; @SpringBootApplication public class GatewayApplication { public static void main(String[] args) { SpringApplication.run(GatewayApplication.class, args); } }3)编写基础配置和路由规则创建application.yml文件,内容如下:server: port: 10010 # 网关端口 spring: application: name: gateway # 服务名称 cloud: nacos: server-addr: localhost:8848 # nacos地址 gateway: routes: # 网关路由配置 - id: user-service # 路由id,自定义,只要唯一即可 # uri: http://127.0.0.1:8081 # 路由的目标地址 http就是固定地址 uri: lb://userservice # 路由的目标地址 lb就是负载均衡,后面跟服务名称 predicates: # 路由断言,也就是判断请求是否符合路由规则的条件 - Path=/user/** # 这个是按照路径匹配,只要以/user/开头就符合要求我们将符合Path 规则的一切请求,都代理到 uri参数指定的地址。本例中,我们将 /user/**开头的请求,代理到lb://userservice,lb是负载均衡,根据服务名拉取服务列表,实现负载均衡。4)重启测试重启网关,访问http://localhost:10010/user/1时,符合/user/**规则,请求转发到uri:http://userservice/user/1,得到了结果:5)网关路由的流程图整个访问的流程如下:总结:网关搭建步骤:创建项目,引入nacos服务发现和gateway依赖配置application.yml,包括服务基本信息、nacos地址、路由路由配置包括:路由id:路由的唯一标示路由目标(uri):路由的目标地址,http代表固定地址,lb代表根据服务名负载均衡路由断言(predicates):判断路由的规则,路由过滤器(filters):对请求或响应做处理接下来,就重点来学习路由断言和路由过滤器的详细知识3.3.断言工厂我们在配置文件中写的断言规则只是字符串,这些字符串会被Predicate Factory读取并处理,转变为路由判断的条件例如Path=/user/**是按照路径匹配,这个规则是由org.springframework.cloud.gateway.handler.predicate.PathRoutePredicateFactory类来处理的,像这样的断言工厂在SpringCloudGateway还有十几个:名称说明示例After是某个时间点后的请求- After=2037-01-20T17:42:47.789-07:00[America/Denver]Before是某个时间点之前的请求- Before=2031-04-13T15:14:47.433+08:00[Asia/Shanghai]Between是某两个时间点之前的请求- Between=2037-01-20T17:42:47.789-07:00[America/Denver], 2037-01-21T17:42:47.789-07:00[America/Denver]Cookie请求必须包含某些cookie- Cookie=chocolate, ch.pHeader请求必须包含某些header- Header=X-Request-Id, \d+Host请求必须是访问某个host(域名)- Host=.somehost.org,.anotherhost.orgMethod请求方式必须是指定方式- Method=GET,POSTPath请求路径必须符合指定规则- Path=/red/{segment},/blue/**Query请求参数必须包含指定参数- Query=name, Jack或者- Query=nameRemoteAddr请求者的ip必须是指定范围- RemoteAddr=192.168.1.1/24Weight权重处理 我们只需要掌握Path这种路由工程就可以了。3.4.过滤器工厂GatewayFilter是网关中提供的一种过滤器,可以对进入网关的请求和微服务返回的响应做处理:3.4.1.路由过滤器的种类Spring提供了31种不同的路由过滤器工厂。例如:名称说明AddRequestHeader给当前请求添加一个请求头RemoveRequestHeader移除请求中的一个请求头AddResponseHeader给响应结果中添加一个响应头RemoveResponseHeader从响应结果中移除有一个响应头RequestRateLimiter限制请求的流量3.4.2.请求头过滤器下面我们以AddRequestHeader 为例来讲解。需求:给所有进入userservice的请求添加一个请求头:Truth=itcast is freaking awesome!只需要修改gateway服务的application.yml文件,添加路由过滤即可:spring: cloud: gateway: routes: - id: user-service uri: lb://userservice predicates: - Path=/user/** filters: # 过滤器 - AddRequestHeader=Truth, Itcast is freaking awesome! # 添加请求头当前过滤器写在userservice路由下,因此仅仅对访问userservice的请求有效。3.4.3.默认过滤器如果要对所有的路由都生效,则可以将过滤器工厂写到default下。格式如下:spring: cloud: gateway: routes: - id: user-service uri: lb://userservice predicates: - Path=/user/** default-filters: # 默认过滤项 - AddRequestHeader=Truth, Itcast is freaking awesome! 3.4.4.总结过滤器的作用是什么?① 对路由的请求或响应做加工处理,比如添加请求头② 配置在路由下的过滤器只对当前路由的请求生效defaultFilters的作用是什么?① 对所有路由都生效的过滤器3.5.全局过滤器上一节学习的过滤器,网关提供了31种,但每一种过滤器的作用都是固定的。如果我们希望拦截请求,做自己的业务逻辑则没办法实现。3.5.1.全局过滤器作用全局过滤器的作用也是处理一切进入网关的请求和微服务响应,与GatewayFilter的作用一样。区别在于GatewayFilter通过配置定义,处理逻辑是固定的;而GlobalFilter的逻辑需要自己写代码实现。定义方式是实现GlobalFilter接口。public interface GlobalFilter { /** * 处理当前请求,有必要的话通过{@link GatewayFilterChain}将请求交给下一个过滤器处理 * * @param exchange 请求上下文,里面可以获取Request、Response等信息 * @param chain 用来把请求委托给下一个过滤器 * @return {@code Mono<Void>} 返回标示当前过滤器业务结束 */ Mono<Void> filter(ServerWebExchange exchange, GatewayFilterChain chain); }在filter中编写自定义逻辑,可以实现下列功能:登录状态判断权限校验请求限流等3.5.2.自定义全局过滤器需求:定义全局过滤器,拦截请求,判断请求的参数是否满足下面条件:参数中是否有authorization,authorization参数值是否为admin如果同时满足则放行,否则拦截实现:在gateway中定义一个过滤器:package cn.itcast.gateway.filters; import org.springframework.cloud.gateway.filter.GatewayFilterChain; import org.springframework.cloud.gateway.filter.GlobalFilter; import org.springframework.core.annotation.Order; import org.springframework.http.HttpStatus; import org.springframework.stereotype.Component; import org.springframework.web.server.ServerWebExchange; import reactor.core.publisher.Mono; @Order(-1) @Component public class AuthorizeFilter implements GlobalFilter { @Override public Mono<Void> filter(ServerWebExchange exchange, GatewayFilterChain chain) { // 1.获取请求参数 MultiValueMap<String, String> params = exchange.getRequest().getQueryParams(); // 2.获取authorization参数 String auth = params.getFirst("authorization"); // 3.校验 if ("admin".equals(auth)) { // 放行 return chain.filter(exchange); } // 4.拦截 // 4.1.禁止访问,设置状态码 exchange.getResponse().setStatusCode(HttpStatus.FORBIDDEN); // 4.2.结束处理 return exchange.getResponse().setComplete(); } }3.5.3.过滤器执行顺序请求进入网关会碰到三类过滤器:当前路由的过滤器、DefaultFilter、GlobalFilter请求路由后,会将当前路由过滤器和DefaultFilter、GlobalFilter,合并到一个过滤器链(集合)中,排序后依次执行每个过滤器:排序的规则是什么呢?每一个过滤器都必须指定一个int类型的order值,order值越小,优先级越高,执行顺序越靠前。GlobalFilter通过实现Ordered接口,或者添加@Order注解来指定order值,由我们自己指定路由过滤器和defaultFilter的order由Spring指定,默认是按照声明顺序从1递增。当过滤器的order值一样时,会按照 defaultFilter > 路由过滤器 > GlobalFilter的顺序执行。详细内容,可以查看源码:org.springframework.cloud.gateway.route.RouteDefinitionRouteLocator#getFilters()方法是先加载defaultFilters,然后再加载某个route的filters,然后合并。org.springframework.cloud.gateway.handler.FilteringWebHandler#handle()方法会加载全局过滤器,与前面的过滤器合并后根据order排序,组织过滤器链3.6.跨域问题3.6.1.什么是跨域问题跨域:域名不一致就是跨域,主要包括:域名不同: www.taobao.com 和 www.taobao.org 和 www.jd.com 和 miaosha.jd.com域名相同,端口不同:localhost:8080和localhost8081跨域问题:浏览器禁止请求的发起者与服务端发生跨域ajax请求,请求被浏览器拦截的问题解决方案:CORS,这个以前应该学习过,这里不再赘述了。不知道的小伙伴可以查看https://www.ruanyifeng.com/blog/2016/04/cors.html3.6.2.模拟跨域问题找到课前资料的页面文件:放入tomcat或者nginx这样的web服务器中,启动并访问。可以在浏览器控制台看到下面的错误:从localhost:8090访问localhost:10010,端口不同,显然是跨域的请求。3.6.3.解决跨域问题在gateway服务的application.yml文件中,添加下面的配置:spring: cloud: gateway: # 。。。 globalcors: # 全局的跨域处理 add-to-simple-url-handler-mapping: true # 解决options请求被拦截问题 corsConfigurations: '[/**]': allowedOrigins: # 允许哪些网站的跨域请求 - "http://localhost:8090" allowedMethods: # 允许的跨域ajax的请求方式 - "GET" - "POST" - "DELETE" - "PUT" - "OPTIONS" allowedHeaders: "*" # 允许在请求中携带的头信息 allowCredentials: true # 是否允许携带cookie maxAge: 360000 # 这次跨域检测的有效期 -

Nacos集群搭建 Nacos集群搭建1.集群结构图官方给出的Nacos集群图:其中包含3个nacos节点,然后一个负载均衡器代理3个Nacos。这里负载均衡器可以使用nginx。我们计划的集群结构:三个nacos节点的地址:节点ipportnacos1192.168.150.18845nacos2192.168.150.18846nacos3192.168.150.188472.搭建集群搭建集群的基本步骤:搭建数据库,初始化数据库表结构下载nacos安装包配置nacos启动nacos集群nginx反向代理2.1.初始化数据库Nacos默认数据存储在内嵌数据库Derby中,不属于生产可用的数据库。官方推荐的最佳实践是使用带有主从的高可用数据库集群,主从模式的高可用数据库可以参考传智教育的后续高手课程。这里我们以单点的数据库为例来讲解。首先新建一个数据库,命名为nacos,而后导入下面的SQL:CREATE TABLE `config_info` ( `id` bigint(20) NOT NULL AUTO_INCREMENT COMMENT 'id', `data_id` varchar(255) NOT NULL COMMENT 'data_id', `group_id` varchar(255) DEFAULT NULL, `content` longtext NOT NULL COMMENT 'content', `md5` varchar(32) DEFAULT NULL COMMENT 'md5', `gmt_create` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '创建时间', `gmt_modified` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '修改时间', `src_user` text COMMENT 'source user', `src_ip` varchar(50) DEFAULT NULL COMMENT 'source ip', `app_name` varchar(128) DEFAULT NULL, `tenant_id` varchar(128) DEFAULT '' COMMENT '租户字段', `c_desc` varchar(256) DEFAULT NULL, `c_use` varchar(64) DEFAULT NULL, `effect` varchar(64) DEFAULT NULL, `type` varchar(64) DEFAULT NULL, `c_schema` text, PRIMARY KEY (`id`), UNIQUE KEY `uk_configinfo_datagrouptenant` (`data_id`,`group_id`,`tenant_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='config_info'; /******************************************/ /* 数据库全名 = nacos_config */ /* 表名称 = config_info_aggr */ /******************************************/ CREATE TABLE `config_info_aggr` ( `id` bigint(20) NOT NULL AUTO_INCREMENT COMMENT 'id', `data_id` varchar(255) NOT NULL COMMENT 'data_id', `group_id` varchar(255) NOT NULL COMMENT 'group_id', `datum_id` varchar(255) NOT NULL COMMENT 'datum_id', `content` longtext NOT NULL COMMENT '内容', `gmt_modified` datetime NOT NULL COMMENT '修改时间', `app_name` varchar(128) DEFAULT NULL, `tenant_id` varchar(128) DEFAULT '' COMMENT '租户字段', PRIMARY KEY (`id`), UNIQUE KEY `uk_configinfoaggr_datagrouptenantdatum` (`data_id`,`group_id`,`tenant_id`,`datum_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='增加租户字段'; /******************************************/ /* 数据库全名 = nacos_config */ /* 表名称 = config_info_beta */ /******************************************/ CREATE TABLE `config_info_beta` ( `id` bigint(20) NOT NULL AUTO_INCREMENT COMMENT 'id', `data_id` varchar(255) NOT NULL COMMENT 'data_id', `group_id` varchar(128) NOT NULL COMMENT 'group_id', `app_name` varchar(128) DEFAULT NULL COMMENT 'app_name', `content` longtext NOT NULL COMMENT 'content', `beta_ips` varchar(1024) DEFAULT NULL COMMENT 'betaIps', `md5` varchar(32) DEFAULT NULL COMMENT 'md5', `gmt_create` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '创建时间', `gmt_modified` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '修改时间', `src_user` text COMMENT 'source user', `src_ip` varchar(50) DEFAULT NULL COMMENT 'source ip', `tenant_id` varchar(128) DEFAULT '' COMMENT '租户字段', PRIMARY KEY (`id`), UNIQUE KEY `uk_configinfobeta_datagrouptenant` (`data_id`,`group_id`,`tenant_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='config_info_beta'; /******************************************/ /* 数据库全名 = nacos_config */ /* 表名称 = config_info_tag */ /******************************************/ CREATE TABLE `config_info_tag` ( `id` bigint(20) NOT NULL AUTO_INCREMENT COMMENT 'id', `data_id` varchar(255) NOT NULL COMMENT 'data_id', `group_id` varchar(128) NOT NULL COMMENT 'group_id', `tenant_id` varchar(128) DEFAULT '' COMMENT 'tenant_id', `tag_id` varchar(128) NOT NULL COMMENT 'tag_id', `app_name` varchar(128) DEFAULT NULL COMMENT 'app_name', `content` longtext NOT NULL COMMENT 'content', `md5` varchar(32) DEFAULT NULL COMMENT 'md5', `gmt_create` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '创建时间', `gmt_modified` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '修改时间', `src_user` text COMMENT 'source user', `src_ip` varchar(50) DEFAULT NULL COMMENT 'source ip', PRIMARY KEY (`id`), UNIQUE KEY `uk_configinfotag_datagrouptenanttag` (`data_id`,`group_id`,`tenant_id`,`tag_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='config_info_tag'; /******************************************/ /* 数据库全名 = nacos_config */ /* 表名称 = config_tags_relation */ /******************************************/ CREATE TABLE `config_tags_relation` ( `id` bigint(20) NOT NULL COMMENT 'id', `tag_name` varchar(128) NOT NULL COMMENT 'tag_name', `tag_type` varchar(64) DEFAULT NULL COMMENT 'tag_type', `data_id` varchar(255) NOT NULL COMMENT 'data_id', `group_id` varchar(128) NOT NULL COMMENT 'group_id', `tenant_id` varchar(128) DEFAULT '' COMMENT 'tenant_id', `nid` bigint(20) NOT NULL AUTO_INCREMENT, PRIMARY KEY (`nid`), UNIQUE KEY `uk_configtagrelation_configidtag` (`id`,`tag_name`,`tag_type`), KEY `idx_tenant_id` (`tenant_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='config_tag_relation'; /******************************************/ /* 数据库全名 = nacos_config */ /* 表名称 = group_capacity */ /******************************************/ CREATE TABLE `group_capacity` ( `id` bigint(20) unsigned NOT NULL AUTO_INCREMENT COMMENT '主键ID', `group_id` varchar(128) NOT NULL DEFAULT '' COMMENT 'Group ID,空字符表示整个集群', `quota` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '配额,0表示使用默认值', `usage` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '使用量', `max_size` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '单个配置大小上限,单位为字节,0表示使用默认值', `max_aggr_count` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '聚合子配置最大个数,,0表示使用默认值', `max_aggr_size` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '单个聚合数据的子配置大小上限,单位为字节,0表示使用默认值', `max_history_count` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '最大变更历史数量', `gmt_create` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '创建时间', `gmt_modified` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '修改时间', PRIMARY KEY (`id`), UNIQUE KEY `uk_group_id` (`group_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='集群、各Group容量信息表'; /******************************************/ /* 数据库全名 = nacos_config */ /* 表名称 = his_config_info */ /******************************************/ CREATE TABLE `his_config_info` ( `id` bigint(64) unsigned NOT NULL, `nid` bigint(20) unsigned NOT NULL AUTO_INCREMENT, `data_id` varchar(255) NOT NULL, `group_id` varchar(128) NOT NULL, `app_name` varchar(128) DEFAULT NULL COMMENT 'app_name', `content` longtext NOT NULL, `md5` varchar(32) DEFAULT NULL, `gmt_create` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP, `gmt_modified` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP, `src_user` text, `src_ip` varchar(50) DEFAULT NULL, `op_type` char(10) DEFAULT NULL, `tenant_id` varchar(128) DEFAULT '' COMMENT '租户字段', PRIMARY KEY (`nid`), KEY `idx_gmt_create` (`gmt_create`), KEY `idx_gmt_modified` (`gmt_modified`), KEY `idx_did` (`data_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='多租户改造'; /******************************************/ /* 数据库全名 = nacos_config */ /* 表名称 = tenant_capacity */ /******************************************/ CREATE TABLE `tenant_capacity` ( `id` bigint(20) unsigned NOT NULL AUTO_INCREMENT COMMENT '主键ID', `tenant_id` varchar(128) NOT NULL DEFAULT '' COMMENT 'Tenant ID', `quota` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '配额,0表示使用默认值', `usage` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '使用量', `max_size` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '单个配置大小上限,单位为字节,0表示使用默认值', `max_aggr_count` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '聚合子配置最大个数', `max_aggr_size` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '单个聚合数据的子配置大小上限,单位为字节,0表示使用默认值', `max_history_count` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '最大变更历史数量', `gmt_create` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '创建时间', `gmt_modified` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '修改时间', PRIMARY KEY (`id`), UNIQUE KEY `uk_tenant_id` (`tenant_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='租户容量信息表'; CREATE TABLE `tenant_info` ( `id` bigint(20) NOT NULL AUTO_INCREMENT COMMENT 'id', `kp` varchar(128) NOT NULL COMMENT 'kp', `tenant_id` varchar(128) default '' COMMENT 'tenant_id', `tenant_name` varchar(128) default '' COMMENT 'tenant_name', `tenant_desc` varchar(256) DEFAULT NULL COMMENT 'tenant_desc', `create_source` varchar(32) DEFAULT NULL COMMENT 'create_source', `gmt_create` bigint(20) NOT NULL COMMENT '创建时间', `gmt_modified` bigint(20) NOT NULL COMMENT '修改时间', PRIMARY KEY (`id`), UNIQUE KEY `uk_tenant_info_kptenantid` (`kp`,`tenant_id`), KEY `idx_tenant_id` (`tenant_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='tenant_info'; CREATE TABLE `users` ( `username` varchar(50) NOT NULL PRIMARY KEY, `password` varchar(500) NOT NULL, `enabled` boolean NOT NULL ); CREATE TABLE `roles` ( `username` varchar(50) NOT NULL, `role` varchar(50) NOT NULL, UNIQUE INDEX `idx_user_role` (`username` ASC, `role` ASC) USING BTREE ); CREATE TABLE `permissions` ( `role` varchar(50) NOT NULL, `resource` varchar(255) NOT NULL, `action` varchar(8) NOT NULL, UNIQUE INDEX `uk_role_permission` (`role`,`resource`,`action`) USING BTREE ); INSERT INTO users (username, password, enabled) VALUES ('nacos', '$2a$10$EuWPZHzz32dJN7jexM34MOeYirDdFAZm2kuWj7VEOJhhZkDrxfvUu', TRUE); INSERT INTO roles (username, role) VALUES ('nacos', 'ROLE_ADMIN');2.2.下载nacosnacos在GitHub上有下载地址:https://github.com/alibaba/nacos/tags,可以选择任意版本下载。本例中才用1.4.1版本:2.3.配置Nacos将这个包解压到任意非中文目录下,如图:目录说明:bin:启动脚本conf:配置文件进入nacos的conf目录,修改配置文件cluster.conf.example,重命名为cluster.conf:然后添加内容:127.0.0.1:8845 127.0.0.1.8846 127.0.0.1.8847然后修改application.properties文件,添加数据库配置spring.datasource.platform=mysql db.num=1 db.url.0=jdbc:mysql://127.0.0.1:3306/nacos?characterEncoding=utf8&connectTimeout=1000&socketTimeout=3000&autoReconnect=true&useUnicode=true&useSSL=false&serverTimezone=UTC db.user.0=root db.password.0=1232.4.启动将nacos文件夹复制三份,分别命名为:nacos1、nacos2、nacos3 然后分别修改三个文件夹中的application.properties,nacos1:server.port=8845nacos2:server.port=8846nacos3:server.port=8847然后分别启动三个nacos节点:startup.cmd2.5.nginx反向代理找到课前资料提供的nginx安装包: 解压到任意非中文目录下: 修改conf/nginx.conf文件,配置如下:upstream nacos-cluster { server 127.0.0.1:8845; server 127.0.0.1:8846; server 127.0.0.1:8847; } server { listen 80; server_name localhost; location /nacos { proxy_pass http://nacos-cluster; } }而后在浏览器访问:http://localhost/nacos即可。代码中application.yml文件配置如下:spring: cloud: nacos: server-addr: localhost:80 # Nacos地址2.6.优化实际部署时,需要给做反向代理的nginx服务器设置一个域名,这样后续如果有服务器迁移nacos的客户端也无需更改配置.Nacos的各个节点应该部署到多个不同服务器,做好容灾和隔离

Nacos集群搭建 Nacos集群搭建1.集群结构图官方给出的Nacos集群图:其中包含3个nacos节点,然后一个负载均衡器代理3个Nacos。这里负载均衡器可以使用nginx。我们计划的集群结构:三个nacos节点的地址:节点ipportnacos1192.168.150.18845nacos2192.168.150.18846nacos3192.168.150.188472.搭建集群搭建集群的基本步骤:搭建数据库,初始化数据库表结构下载nacos安装包配置nacos启动nacos集群nginx反向代理2.1.初始化数据库Nacos默认数据存储在内嵌数据库Derby中,不属于生产可用的数据库。官方推荐的最佳实践是使用带有主从的高可用数据库集群,主从模式的高可用数据库可以参考传智教育的后续高手课程。这里我们以单点的数据库为例来讲解。首先新建一个数据库,命名为nacos,而后导入下面的SQL:CREATE TABLE `config_info` ( `id` bigint(20) NOT NULL AUTO_INCREMENT COMMENT 'id', `data_id` varchar(255) NOT NULL COMMENT 'data_id', `group_id` varchar(255) DEFAULT NULL, `content` longtext NOT NULL COMMENT 'content', `md5` varchar(32) DEFAULT NULL COMMENT 'md5', `gmt_create` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '创建时间', `gmt_modified` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '修改时间', `src_user` text COMMENT 'source user', `src_ip` varchar(50) DEFAULT NULL COMMENT 'source ip', `app_name` varchar(128) DEFAULT NULL, `tenant_id` varchar(128) DEFAULT '' COMMENT '租户字段', `c_desc` varchar(256) DEFAULT NULL, `c_use` varchar(64) DEFAULT NULL, `effect` varchar(64) DEFAULT NULL, `type` varchar(64) DEFAULT NULL, `c_schema` text, PRIMARY KEY (`id`), UNIQUE KEY `uk_configinfo_datagrouptenant` (`data_id`,`group_id`,`tenant_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='config_info'; /******************************************/ /* 数据库全名 = nacos_config */ /* 表名称 = config_info_aggr */ /******************************************/ CREATE TABLE `config_info_aggr` ( `id` bigint(20) NOT NULL AUTO_INCREMENT COMMENT 'id', `data_id` varchar(255) NOT NULL COMMENT 'data_id', `group_id` varchar(255) NOT NULL COMMENT 'group_id', `datum_id` varchar(255) NOT NULL COMMENT 'datum_id', `content` longtext NOT NULL COMMENT '内容', `gmt_modified` datetime NOT NULL COMMENT '修改时间', `app_name` varchar(128) DEFAULT NULL, `tenant_id` varchar(128) DEFAULT '' COMMENT '租户字段', PRIMARY KEY (`id`), UNIQUE KEY `uk_configinfoaggr_datagrouptenantdatum` (`data_id`,`group_id`,`tenant_id`,`datum_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='增加租户字段'; /******************************************/ /* 数据库全名 = nacos_config */ /* 表名称 = config_info_beta */ /******************************************/ CREATE TABLE `config_info_beta` ( `id` bigint(20) NOT NULL AUTO_INCREMENT COMMENT 'id', `data_id` varchar(255) NOT NULL COMMENT 'data_id', `group_id` varchar(128) NOT NULL COMMENT 'group_id', `app_name` varchar(128) DEFAULT NULL COMMENT 'app_name', `content` longtext NOT NULL COMMENT 'content', `beta_ips` varchar(1024) DEFAULT NULL COMMENT 'betaIps', `md5` varchar(32) DEFAULT NULL COMMENT 'md5', `gmt_create` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '创建时间', `gmt_modified` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '修改时间', `src_user` text COMMENT 'source user', `src_ip` varchar(50) DEFAULT NULL COMMENT 'source ip', `tenant_id` varchar(128) DEFAULT '' COMMENT '租户字段', PRIMARY KEY (`id`), UNIQUE KEY `uk_configinfobeta_datagrouptenant` (`data_id`,`group_id`,`tenant_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='config_info_beta'; /******************************************/ /* 数据库全名 = nacos_config */ /* 表名称 = config_info_tag */ /******************************************/ CREATE TABLE `config_info_tag` ( `id` bigint(20) NOT NULL AUTO_INCREMENT COMMENT 'id', `data_id` varchar(255) NOT NULL COMMENT 'data_id', `group_id` varchar(128) NOT NULL COMMENT 'group_id', `tenant_id` varchar(128) DEFAULT '' COMMENT 'tenant_id', `tag_id` varchar(128) NOT NULL COMMENT 'tag_id', `app_name` varchar(128) DEFAULT NULL COMMENT 'app_name', `content` longtext NOT NULL COMMENT 'content', `md5` varchar(32) DEFAULT NULL COMMENT 'md5', `gmt_create` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '创建时间', `gmt_modified` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '修改时间', `src_user` text COMMENT 'source user', `src_ip` varchar(50) DEFAULT NULL COMMENT 'source ip', PRIMARY KEY (`id`), UNIQUE KEY `uk_configinfotag_datagrouptenanttag` (`data_id`,`group_id`,`tenant_id`,`tag_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='config_info_tag'; /******************************************/ /* 数据库全名 = nacos_config */ /* 表名称 = config_tags_relation */ /******************************************/ CREATE TABLE `config_tags_relation` ( `id` bigint(20) NOT NULL COMMENT 'id', `tag_name` varchar(128) NOT NULL COMMENT 'tag_name', `tag_type` varchar(64) DEFAULT NULL COMMENT 'tag_type', `data_id` varchar(255) NOT NULL COMMENT 'data_id', `group_id` varchar(128) NOT NULL COMMENT 'group_id', `tenant_id` varchar(128) DEFAULT '' COMMENT 'tenant_id', `nid` bigint(20) NOT NULL AUTO_INCREMENT, PRIMARY KEY (`nid`), UNIQUE KEY `uk_configtagrelation_configidtag` (`id`,`tag_name`,`tag_type`), KEY `idx_tenant_id` (`tenant_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='config_tag_relation'; /******************************************/ /* 数据库全名 = nacos_config */ /* 表名称 = group_capacity */ /******************************************/ CREATE TABLE `group_capacity` ( `id` bigint(20) unsigned NOT NULL AUTO_INCREMENT COMMENT '主键ID', `group_id` varchar(128) NOT NULL DEFAULT '' COMMENT 'Group ID,空字符表示整个集群', `quota` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '配额,0表示使用默认值', `usage` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '使用量', `max_size` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '单个配置大小上限,单位为字节,0表示使用默认值', `max_aggr_count` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '聚合子配置最大个数,,0表示使用默认值', `max_aggr_size` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '单个聚合数据的子配置大小上限,单位为字节,0表示使用默认值', `max_history_count` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '最大变更历史数量', `gmt_create` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '创建时间', `gmt_modified` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '修改时间', PRIMARY KEY (`id`), UNIQUE KEY `uk_group_id` (`group_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='集群、各Group容量信息表'; /******************************************/ /* 数据库全名 = nacos_config */ /* 表名称 = his_config_info */ /******************************************/ CREATE TABLE `his_config_info` ( `id` bigint(64) unsigned NOT NULL, `nid` bigint(20) unsigned NOT NULL AUTO_INCREMENT, `data_id` varchar(255) NOT NULL, `group_id` varchar(128) NOT NULL, `app_name` varchar(128) DEFAULT NULL COMMENT 'app_name', `content` longtext NOT NULL, `md5` varchar(32) DEFAULT NULL, `gmt_create` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP, `gmt_modified` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP, `src_user` text, `src_ip` varchar(50) DEFAULT NULL, `op_type` char(10) DEFAULT NULL, `tenant_id` varchar(128) DEFAULT '' COMMENT '租户字段', PRIMARY KEY (`nid`), KEY `idx_gmt_create` (`gmt_create`), KEY `idx_gmt_modified` (`gmt_modified`), KEY `idx_did` (`data_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='多租户改造'; /******************************************/ /* 数据库全名 = nacos_config */ /* 表名称 = tenant_capacity */ /******************************************/ CREATE TABLE `tenant_capacity` ( `id` bigint(20) unsigned NOT NULL AUTO_INCREMENT COMMENT '主键ID', `tenant_id` varchar(128) NOT NULL DEFAULT '' COMMENT 'Tenant ID', `quota` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '配额,0表示使用默认值', `usage` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '使用量', `max_size` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '单个配置大小上限,单位为字节,0表示使用默认值', `max_aggr_count` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '聚合子配置最大个数', `max_aggr_size` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '单个聚合数据的子配置大小上限,单位为字节,0表示使用默认值', `max_history_count` int(10) unsigned NOT NULL DEFAULT '0' COMMENT '最大变更历史数量', `gmt_create` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '创建时间', `gmt_modified` datetime NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '修改时间', PRIMARY KEY (`id`), UNIQUE KEY `uk_tenant_id` (`tenant_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='租户容量信息表'; CREATE TABLE `tenant_info` ( `id` bigint(20) NOT NULL AUTO_INCREMENT COMMENT 'id', `kp` varchar(128) NOT NULL COMMENT 'kp', `tenant_id` varchar(128) default '' COMMENT 'tenant_id', `tenant_name` varchar(128) default '' COMMENT 'tenant_name', `tenant_desc` varchar(256) DEFAULT NULL COMMENT 'tenant_desc', `create_source` varchar(32) DEFAULT NULL COMMENT 'create_source', `gmt_create` bigint(20) NOT NULL COMMENT '创建时间', `gmt_modified` bigint(20) NOT NULL COMMENT '修改时间', PRIMARY KEY (`id`), UNIQUE KEY `uk_tenant_info_kptenantid` (`kp`,`tenant_id`), KEY `idx_tenant_id` (`tenant_id`) ) ENGINE=InnoDB DEFAULT CHARSET=utf8 COLLATE=utf8_bin COMMENT='tenant_info'; CREATE TABLE `users` ( `username` varchar(50) NOT NULL PRIMARY KEY, `password` varchar(500) NOT NULL, `enabled` boolean NOT NULL ); CREATE TABLE `roles` ( `username` varchar(50) NOT NULL, `role` varchar(50) NOT NULL, UNIQUE INDEX `idx_user_role` (`username` ASC, `role` ASC) USING BTREE ); CREATE TABLE `permissions` ( `role` varchar(50) NOT NULL, `resource` varchar(255) NOT NULL, `action` varchar(8) NOT NULL, UNIQUE INDEX `uk_role_permission` (`role`,`resource`,`action`) USING BTREE ); INSERT INTO users (username, password, enabled) VALUES ('nacos', '$2a$10$EuWPZHzz32dJN7jexM34MOeYirDdFAZm2kuWj7VEOJhhZkDrxfvUu', TRUE); INSERT INTO roles (username, role) VALUES ('nacos', 'ROLE_ADMIN');2.2.下载nacosnacos在GitHub上有下载地址:https://github.com/alibaba/nacos/tags,可以选择任意版本下载。本例中才用1.4.1版本:2.3.配置Nacos将这个包解压到任意非中文目录下,如图:目录说明:bin:启动脚本conf:配置文件进入nacos的conf目录,修改配置文件cluster.conf.example,重命名为cluster.conf:然后添加内容:127.0.0.1:8845 127.0.0.1.8846 127.0.0.1.8847然后修改application.properties文件,添加数据库配置spring.datasource.platform=mysql db.num=1 db.url.0=jdbc:mysql://127.0.0.1:3306/nacos?characterEncoding=utf8&connectTimeout=1000&socketTimeout=3000&autoReconnect=true&useUnicode=true&useSSL=false&serverTimezone=UTC db.user.0=root db.password.0=1232.4.启动将nacos文件夹复制三份,分别命名为:nacos1、nacos2、nacos3 然后分别修改三个文件夹中的application.properties,nacos1:server.port=8845nacos2:server.port=8846nacos3:server.port=8847然后分别启动三个nacos节点:startup.cmd2.5.nginx反向代理找到课前资料提供的nginx安装包: 解压到任意非中文目录下: 修改conf/nginx.conf文件,配置如下:upstream nacos-cluster { server 127.0.0.1:8845; server 127.0.0.1:8846; server 127.0.0.1:8847; } server { listen 80; server_name localhost; location /nacos { proxy_pass http://nacos-cluster; } }而后在浏览器访问:http://localhost/nacos即可。代码中application.yml文件配置如下:spring: cloud: nacos: server-addr: localhost:80 # Nacos地址2.6.优化实际部署时,需要给做反向代理的nginx服务器设置一个域名,这样后续如果有服务器迁移nacos的客户端也无需更改配置.Nacos的各个节点应该部署到多个不同服务器,做好容灾和隔离 -